Best Practices for Automated vs Manual Testing: Boost Quality and Speed

The real debate isn't automated vs manual testing; it's about how to blend them. At its core, the difference is simple: automation uses scripts for repetitive, high-volume checks, while manual testing leans on human insight for nuanced, user-focused validation. The trick isn’t picking a side but building a smart, hybrid strategy that gets you the best of both worlds.

Understanding the Foundations of Software Testing

In software development, both automated and manual testing have the same end goal: ship a high-quality, bug-free product. They just take different paths to get there, and each approach brings something unique to the table. Figuring out their distinct roles is the first step toward a QA strategy that actually works.

Manual testing is exactly what it sounds like—a hands-on process where a QA analyst acts like an end-user, meticulously clicking through the application to find what’s broken. It's all about that human touch.

Manual testing shines where human intuition is everything. It's the only way to genuinely check the user experience, visual design, and the overall 'feel' of an application—things a script could never measure.

On the other side, automated testing involves writing scripts that tell software tools to run tests without anyone needing to be there. This approach is built for efficiency and scale, especially for tasks you have to do over and over again.

Core Distinctions at a Glance

The best teams don't see this as a fight between automated and manual testing. They see them as two sides of the same coin. Automation is your safety net for the predictable, high-risk parts of your app, while manual testing explores the weird, unpredictable paths a real user might take.

Here's a quick look at how they stack up.

Key Differences Between Automated and Manual Testing

This table breaks down the fundamental characteristics of each approach, showing where one excels and the other might fall short.

| Attribute | Automated Testing | Manual Testing |
|---|---|---|
| Execution | Performed by scripts and tools | Performed by human testers |
| Ideal Use Case | Regression, performance, load tests | Usability, exploratory, ad-hoc tests |
| Initial Cost | High (tools, script development) | Low (primarily tester time) |
| Long-Term Cost | Low (reusable scripts) | High (linear, scales with effort) |
| Speed | Very fast, runs 24/7 | Slow, limited by human pace |
| Accuracy | Highly consistent, no human error | Prone to human error, variable |
| Human Element | Lacks intuition and judgment | Strong in creativity and feedback |

This side-by-side view makes it clear they solve different problems.

A hybrid model lets development teams move fast without shipping junk. Automation handles the boring, repetitive checks needed in a CI/CD pipeline, making sure new code doesn’t break old features.

This frees up your human testers to focus their valuable time on the creative, exploratory work—finding those subtle bugs and usability quirks that scripts will always miss.

Speed, Scalability, and Accuracy: Where the Real Differences Emerge

When you pit automated vs manual testing against each other, the conversation always comes back to three things: speed, scalability, and accuracy. These aren't just technical benchmarks; they're the factors that determine how fast you can ship and how much your users can trust your product. Let's break down where each approach shines—and where it falls apart.

Manual testing moves at a human speed. A sharp QA analyst can probably get through a couple of dozen test cases in a day, but that’s it. They’re limited by focus, fatigue, and the physical act of clicking through an interface. This slow, deliberate pace is fantastic for exploratory testing where you need creativity, but it’s a massive bottleneck for anything repetitive.

Automation, on the other hand, operates at machine speed. What takes a human days to check can be done in minutes or hours by a script. This completely changes the feedback loop for developers, turning a multi-day wait into near-instant validation.

The Unmatched Speed of Automation

The most obvious win for automation is pure, brute-force speed. Automated scripts can tear through thousands of test cases overnight and have a full report ready before your team has even had their morning coffee. For any team running a CI/CD pipeline, this kind of rapid validation isn't just nice—it's non-negotiable.

Here's a concrete example: a full regression suite might take 11.6 working days of manual effort. Run that same suite with 100 parallel automated executions, and the time drops to just 1.43 hours. At eight hours per working day, that's roughly a 64x speedup. This becomes even more critical when developers are using AI coding assistants. A tool like kluster.ai can help generate and verify code in seconds, but that benefit evaporates if the testing cycle that follows takes days. The State of Testing Report has some great data on how these benchmarks are evolving across the industry.
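The arithmetic behind that speedup can be checked with a short sketch. It assumes a "day" of manual work means an 8-hour working day, which the example does not state; with 24-hour days the ratio would be about three times larger. The function name is made up for illustration.

```python
# Sketch: reproduce the speedup figure from the regression-suite example.
# Assumption (not stated in the article): one day of manual work is 8 hours.
MANUAL_DAYS = 11.6
HOURS_PER_WORKDAY = 8      # assumed
AUTOMATED_HOURS = 1.43     # wall-clock time with 100 parallel executions


def speedup(manual_days: float, hours_per_day: float, automated_hours: float) -> float:
    """Return how many times faster the automated run is than the manual one."""
    manual_hours = manual_days * hours_per_day
    return manual_hours / automated_hours


if __name__ == "__main__":
    ratio = speedup(MANUAL_DAYS, HOURS_PER_WORKDAY, AUTOMATED_HOURS)
    print(f"Manual effort: {MANUAL_DAYS * HOURS_PER_WORKDAY:.1f} hours")
    print(f"Speedup: about {ratio:.1f}x")
```

Note that 100 parallel workers do not deliver a 100x gain here: setup time, uneven test durations, and shared resources usually keep the real ratio below the worker count.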

Scaling Tests Across Every Configuration Imaginable

Scalability is where the gap between manual and automated testing becomes a chasm. Manual testing just doesn't scale well. To double your test output, you have to double your team and your time. It’s a linear equation that makes it financially and logistically impossible to manually verify an app on hundreds of different browser versions, operating systems, and device types.

Automation scales with infrastructure instead of headcount. Using cloud-based testing grids, you can run a single test suite across many environments at the same time. This parallel execution gives you coverage that would be impractical to achieve manually.

Automation transforms testing from a linear, time-consuming task into a parallel, on-demand process. It’s the difference between building a single car by hand and running an entire assembly line.

This isn’t just about making life easier; it's about managing risk. It ensures the feature that works perfectly on the latest Chrome for macOS doesn't completely break for a user on an older version of Firefox for Windows.
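As a rough illustration of that fan-out, the sketch below runs one suite against every browser and platform pair concurrently. The configuration names and the `run_suite` stub are hypothetical; a real version would dispatch each combination to whatever testing grid you use instead of simulating a result.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

# Hypothetical configuration matrix; a real one would come from your support policy.
BROWSERS = ["chrome-latest", "firefox-old", "safari-latest"]
PLATFORMS = ["macos", "windows", "linux"]


def run_suite(browser: str, platform: str) -> tuple[str, str, bool]:
    """Placeholder for running the suite in one remote environment.

    This stub only simulates a result: it pretends the suite breaks on an
    older Firefox on Windows so the matrix has something to catch.
    """
    passed = not (browser == "firefox-old" and platform == "windows")
    return browser, platform, passed


def run_matrix(max_workers: int = 8) -> list[tuple[str, str, bool]]:
    """Run the same suite against every browser/platform pair concurrently."""
    combos = list(product(BROWSERS, PLATFORMS))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map keeps results in the same order as the input combinations.
        return list(pool.map(lambda combo: run_suite(*combo), combos))


if __name__ == "__main__":
    for browser, platform, passed in run_matrix():
        print(f"{browser:15} {platform:8} {'PASS' if passed else 'FAIL'}")
```

Adding a browser or platform only lengthens the lists; the wall-clock time stays close to that of the slowest single run as long as there are enough workers.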

Robots Don't Get Tired: Ensuring Consistent and Accurate Results

Speed and scalability are about getting things done faster, but accuracy is about building trust in your product. Humans, no matter how skilled or careful, make mistakes. They get tired, misinterpret a requirement, or accidentally skip a step after doing the same check for the hundredth time. This human element introduces variability, which means bugs can slip through.

Automated tests are the opposite. They run the exact same steps, with the exact same data, every single time. This robotic precision removes human error from the execution of repetitive tasks. When a well-maintained automated test fails, it usually points to a real problem with the code rather than a slip in how the test was run, although flaky or outdated scripts can still raise false alarms.

This level of accuracy provides a reliable foundation for quality. It’s why high-stakes industries rely so heavily on it—the finance sector, for example, reports automation coverage as high as 67.1%. In environments like that, consistency isn't a luxury; it's a requirement for compliance and survival. The automated vs manual testing debate isn't just about efficiency; it's a fundamental business decision.

When to Choose Automation or Manual Testing

The whole automated vs. manual testing debate isn't about picking a winner. It's about picking the right tool for the job. Get this wrong, and you're looking at wasted time, blown deadlines, and buggy software. A smart strategy means knowing exactly where each approach shines.

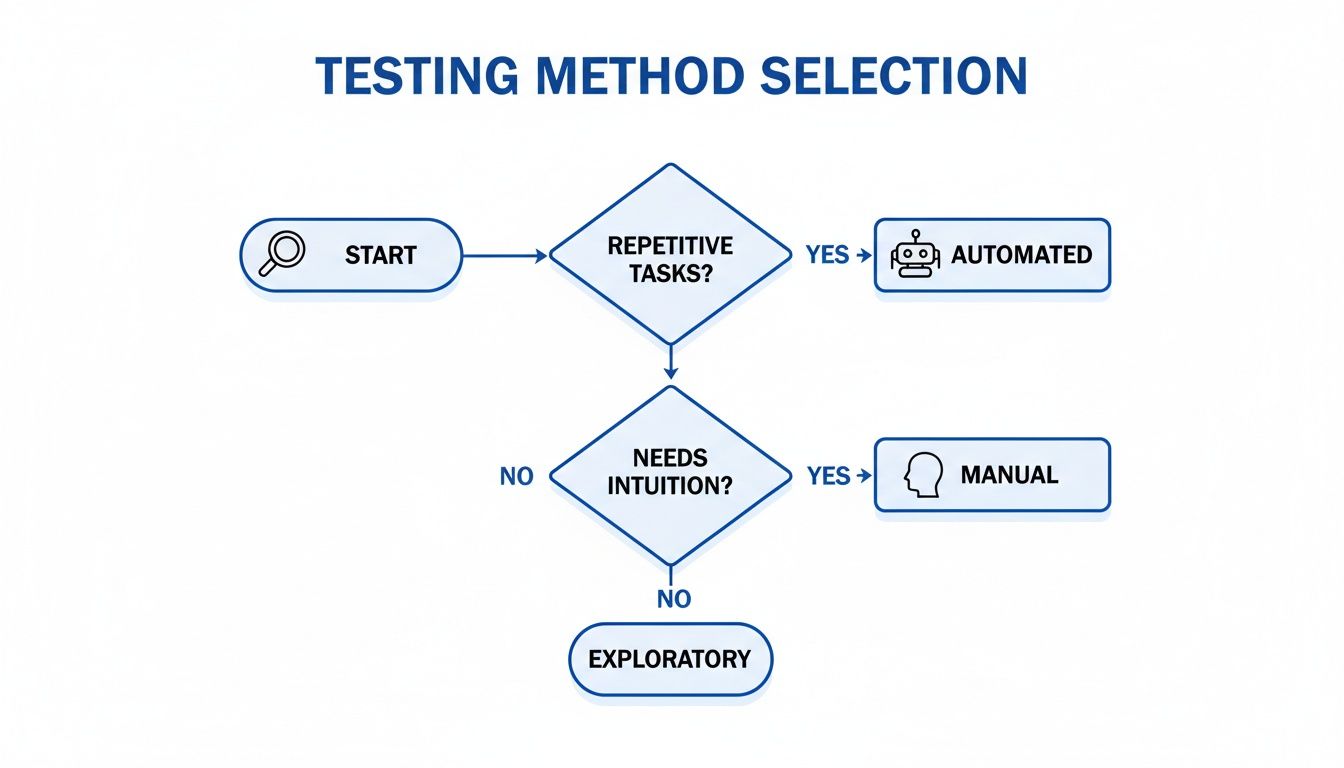

This decision tree gives you a simple way to think about it. Is the task repetitive? Or does it need a human brain?

The flowchart gets to the heart of the trade-off. If you need consistency and repetition, automation is your workhorse. If you need subjective judgment or creative exploration, you need a person.

Ideal Scenarios for Test Automation

Automation absolutely thrives on predictability. It's the undisputed champ for any task that has to be done the exact same way over and over again. You eliminate human error and get feedback loops that are ridiculously fast. It’s perfect for stable parts of your app where the UI isn't constantly changing.

Here are the prime use cases for automation:

- Regression Testing: This is the bread and butter of automation and where you'll see the biggest ROI. Automation makes sure your new code didn't accidentally break something that used to work, running thousands of checks flawlessly after every single build.

- Performance and Load Testing: Trying to simulate thousands of people hitting your app at once is just impossible for a human team. Automation scripts can generate that massive load to pinpoint exactly where things slow down or break under stress.

- Data-Driven Testing: Need to test the same feature with a huge set of different inputs? Think about checking a login form with hundreds of username and password combos. Automation is the only way to do this without losing your mind.

- API Testing: Checking API endpoints involves sending a request and making sure you get the right response back. It’s a precise, repetitive task that's tailor-made for automation. Scripts can verify status codes, response bodies, and headers way faster than any person could.
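The data-driven case from the list above can be sketched with Python's built-in `unittest`. The `is_valid_login` rule and the sample credentials are invented for illustration; in practice the rows would often be loaded from a CSV file or a database.

```python
import unittest


def is_valid_login(username: str, password: str) -> bool:
    """Hypothetical validation rule used only for this example."""
    return bool(username.strip()) and len(password) >= 8


# Each row is (username, password, expected_result).
LOGIN_CASES = [
    ("alice", "correct-horse", True),
    ("alice", "short", False),
    ("", "correct-horse", False),
    ("   ", "correct-horse", False),
    ("bob", "12345678", True),
]


class LoginDataDrivenTest(unittest.TestCase):
    def test_login_combinations(self):
        for username, password, expected in LOGIN_CASES:
            # subTest reports every failing row instead of stopping at the first one.
            with self.subTest(username=username, password=password):
                self.assertEqual(is_valid_login(username, password), expected)
```

Saved as a test module, this runs with `python -m unittest`. Covering hundreds of combinations then means adding rows, not writing more test code.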

An engineering manager might set up an automated suite to validate every API endpoint after each code push. This ensures the core backend is solid before a human tester even looks at the UI, freeing up your team to focus on trickier, user-facing scenarios.
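A minimal version of such an endpoint check might look like the sketch below. It uses only the standard library and starts a tiny stub server so the example runs offline; the `/health` path, the response body, and the helper names are assumptions, not part of any real backend.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, build_opener


class StubHandler(BaseHTTPRequestHandler):
    """Stand-in for the real backend so the example runs offline."""

    def do_GET(self):
        if self.path == "/health":
            body = json.dumps({"status": "ok"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, *args):
        pass  # keep test output quiet


def check_endpoint(url: str) -> dict:
    """Fetch a URL and return the parts an API test typically asserts on."""
    opener = build_opener(ProxyHandler({}))  # ignore system proxies for this local call
    with opener.open(url, timeout=5) as response:
        return {
            "status": response.status,
            "content_type": response.headers.get("Content-Type"),
            "body": json.loads(response.read()),
        }


def run_api_check() -> dict:
    """Start the stub on a free port, call it once, then shut it down."""
    server = HTTPServer(("127.0.0.1", 0), StubHandler)  # port 0 = pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return check_endpoint(f"http://127.0.0.1:{server.server_port}/health")
    finally:
        server.shutdown()
        server.server_close()
```

In a real pipeline, `check_endpoint` would point at a deployed test environment, and the assertions on status code, headers, and body would run after each push.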

Where Manual Testing Remains Irreplaceable

Automation is great for the grunt work, but it has zero intuition, creativity, or empathy. That's where manual testing comes in. It's essential for looking at the parts of your application that directly affect the user experience—the stuff where a gut feeling or subjective feedback is everything.

Automation can tell you if a button works, but only a human can tell you if the button is in the right place, if it's intuitive to use, or if its color scheme is visually jarring.

Manual testing is still the king for:

- Exploratory Testing: This is where testers go off-script. They get to freely poke around the application, using their curiosity and experience to find weird edge cases and unexpected bugs that a rigid script would cruise right past.

- Usability Testing: Figuring out how intuitive and user-friendly an app feels requires a real person. Automation has its strengths, but usability testing has to be conducted manually, because it is the only way to understand the user's perspective.

- Ad-Hoc Testing: Sometimes you just need a quick, informal check to make sure a specific bug fix worked or a tiny feature change didn't break anything. A quick manual test is way more efficient than writing a whole new automation script for it.

Think about launching a new user onboarding flow. A manual tester does more than just see if the "Next" button works. They'll judge if the instructions are clear, if the whole process feels natural, and if it's a welcoming experience for someone new. Those are insights you just can't get from a script.

Digging Into the Cost and ROI of Each Approach

Sooner or later, the conversation around automated versus manual testing always comes down to money. At first glance, the math seems simple. Manual testing looks cheap upfront because you're just paying for people's time. Automation, on the other hand, requires a pretty hefty initial spend on tools, infrastructure, and engineers who know what they're doing.

But that surface-level view is misleading. It completely misses the long-term value and the real return on your investment (ROI).

Manual testing costs are painfully linear. As your app gets bigger and more complex, you need more testers and more hours to keep up. It's a direct, escalating line item on your budget that never goes away. Every single regression cycle, every new feature, and every platform you add to your support list just piles on more recurring costs. That model just doesn't scale, and it eventually becomes a massive bottleneck.

Automation is different. It’s a front-loaded investment that pays compounding returns. Yes, the initial costs are higher, but once that test suite is built, you can run it a thousand times for virtually nothing. That completely flips the economic equation of quality assurance on its head.
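One way to make that trade-off concrete is a simple break-even model. Every figure in the usage example is hypothetical, and the model deliberately ignores ongoing script maintenance, which in practice pushes the break-even point later.

```python
import math


def manual_cost(runs: int, cost_per_run: float) -> float:
    """Manual cost grows linearly with the number of regression cycles."""
    return runs * cost_per_run


def automation_cost(runs: int, upfront: float, cost_per_run: float) -> float:
    """Automation pays a one-time setup cost plus a small cost per run."""
    return upfront + runs * cost_per_run


def break_even_runs(upfront: float, manual_per_run: float, automated_per_run: float) -> int:
    """Smallest number of runs at which automation is no more expensive than manual."""
    saving_per_run = manual_per_run - automated_per_run
    if saving_per_run <= 0:
        raise ValueError("automation never pays off if it is not cheaper per run")
    return math.ceil(upfront / saving_per_run)


if __name__ == "__main__":
    # Hypothetical numbers: 40,000 to build the suite, 2,000 per manual cycle,
    # 50 per automated cycle.
    runs = break_even_runs(upfront=40_000, manual_per_run=2_000, automated_per_run=50)
    print(f"Automation breaks even after {runs} regression cycles")
```

With these made-up inputs the suite pays for itself after 21 cycles; a team that runs regression on every commit reaches that point far sooner than one that releases quarterly.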

The Upfront Costs of Automation

Setting up a solid automation framework is more than just buying a software license. The real investment is in the skilled engineers you need to design, build, and maintain test scripts that don’t break every five minutes.

Here's where the initial money goes:

- Tooling and Infrastructure: This includes licenses for your automation software and setting up the cloud or on-premise hardware needed to run tests in parallel.

- Script Development: This is the big one. It’s all the engineering hours it takes to write that first batch of automated tests.

- Training and Expertise: You need to get your team up to speed on the new tools and teach them how to write tests that are actually maintainable and reliable down the road.

These costs are very real, but think of them as a largely front-loaded investment that creates a lasting asset for your company, as long as you budget some ongoing time to keep the scripts maintained.

Calculating the Long-Term ROI

The real ROI from automation snaps into focus once you look past that initial setup phase. Automation delivers its value by changing how your entire development team works, slashing your time-to-market, and boosting product quality in ways that directly pad your bottom line.

Automation’s true ROI isn't just about cutting QA headcount; it's about letting the entire engineering org move faster, innovate more, and ship better products with confidence.

One of the biggest financial wins comes from catching bugs way earlier in the development cycle. A defect that makes it to production is often cited as costing up to 100 times more to fix than one you catch during development. Automated tests running on every single commit are your safety net, stopping many of those expensive bugs before they reach users.

This early detection creates a massive ripple effect. The data backs this up: cost savings and ROI are the main reasons people adopt automation. In fact, in nearly half of all implementations, test automation is already replacing over 50% of manual testing efforts. That shift directly cuts labor costs and lets teams scale without having to hire a massive QA army. You can dive deeper into these trends by checking out some recent software testing statistics.

A Data-Backed Business Case

At the end of the day, choosing between automated and manual testing is a strategic business decision. Manual testing might seem cheaper day-to-day, but its costs just keep stacking up forever. Automation demands a real investment, but it delivers an ROI that just keeps growing.

Think about the business outcomes:

- Faster Revenue Generation: When you can shrink release cycles from weeks to days, you get new features to your customers faster. That means you start making money from those features sooner.

- Improved Developer Productivity: Automation takes all the repetitive, boring checks off your developers' plates. That frees them up to focus on what they do best: innovating and building new things instead of chasing down regressions.

- Enhanced Brand Reputation: Fewer bugs in production mean a better user experience, happier customers, and a stronger brand. Those are priceless long-term assets.

For any engineering manager, the business case is crystal clear. When you connect your automation investment to these tangible results, you can easily justify the initial spend and show everyone how a modern testing strategy directly drives business growth and profitability.

How to Build a Hybrid Testing Strategy

The real debate isn't about choosing automated vs. manual testing. It's about building a smart strategy where they work together. A solid hybrid model uses automation for its relentless speed and manual testing for its irreplaceable human insight, creating a quality net that catches far more than either approach could alone. This blend is absolutely essential for modern Agile and DevOps teams, where you can't afford to sacrifice quality for speed.

Crafting this strategy starts with deciding what to automate. Let's be clear: you can't—and shouldn't—automate everything. The trick is to prioritize the tests that give you the biggest wins right away, creating a strong foundation to build on.

Defining Your Automation Scope

Your first move should be to target the tests that are the most tedious to perform by hand and offer the highest return on investment. It's a pragmatic approach that delivers immediate value.

Your initial automation hit list should include:

- Regression Suite: These tests are non-negotiable for automation. They confirm that your new code hasn't torpedoed existing features. Since they're repetitive and have to run with every single release, automating them frees up a massive amount of time.

- Smoke Tests: Think of these as quick health checks. They verify that the absolute most critical functions of your app are still standing after a new build. Automating them gives you an instant "go/no-go" signal to decide if further testing is even worth it.

- Data-Driven Tests: Any test that needs to be run with tons of different data sets is a perfect candidate for automation. Think of a login form that needs to be checked with valid, invalid, and edge-case credentials. Automation handles this exhaustive work without the mind-numbing manual effort.
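The smoke-test "go/no-go" signal from the list above can be sketched as a small gate function. The three checks are hypothetical placeholders that always pass; real ones would hit the running build.

```python
from typing import Callable


# Hypothetical critical checks; each returns True when the feature is healthy.
def homepage_loads() -> bool:
    return True


def login_works() -> bool:
    return True


def checkout_reachable() -> bool:
    return True


SMOKE_CHECKS: dict[str, Callable[[], bool]] = {
    "homepage": homepage_loads,
    "login": login_works,
    "checkout": checkout_reachable,
}


def smoke_gate(checks: dict[str, Callable[[], bool]]) -> tuple[bool, list[str]]:
    """Run every check and return (go, names_of_failed_checks)."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            ok = False  # a crashing check counts as a failure
        if not ok:
            failed.append(name)
    return (not failed, failed)


if __name__ == "__main__":
    go, failed = smoke_gate(SMOKE_CHECKS)
    print("GO" if go else f"NO-GO, failed checks: {', '.join(failed)}")
```

A CI job could call `smoke_gate` right after a build and skip the longer regression suite whenever it reports a no-go.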

As you build out this hybrid strategy, integrating powerful API testing tools can be a game-changer for your automation efforts. This helps you establish a fast and reliable feedback loop for the very backbone of your application.

Clarifying Roles in a Shared Quality Model

In a true hybrid model, quality becomes everyone's job, not just something you toss over the wall to the QA team. Developers and QA engineers need clearly defined roles that complement each other, building a culture where the whole team owns the quality of the final product.

Here’s a practical way to divide the work:

- Developers: They own unit and integration testing. By writing automated tests for their own code, they catch bugs at the source, ensuring components work as designed before anything gets merged. It’s the first line of defense.

- QA Engineers: They take the lead on building and maintaining the broader, end-to-end automated regression suite. More importantly, this frees them up to focus on high-value manual work like exploratory testing, usability assessments, and digging into complex user scenarios.

This setup lets each person play to their strengths, leading to a much more efficient and effective quality process. For a closer look, our guide on the role of test automation in quality assurance breaks down how to structure these teams for success.

Embracing a Shift-Left Mindset

A truly successful hybrid strategy is built on a "shift-left" mindset. This just means pulling testing activities earlier into the development lifecycle. Instead of treating quality as a final checkpoint before release, it's woven into every stage, from the first design mockups to the final deployment. Automation is what makes this possible by providing a constant stream of feedback right from the start.

Human oversight is the critical counterbalance to automation. It ensures you’re not just building the product right, but also building the right product—one that users will find intuitive and valuable.

While automated scripts are great at checking if the software meets the requirements, it's manual exploratory testing that uncovers the unexpected. This is where a QA engineer’s creativity and deep product knowledge shine, allowing them to find bizarre edge cases and usability quirks that no script could ever predict. This human touch is irreplaceable; it's what separates a functional product from one that's truly a delight to use.

How AI Is Modernizing Software Testing

The old lines between automated and manual testing are starting to fade, and it's all thanks to artificial intelligence. AI isn't just another tool in the box; it represents a fundamental shift in how we think about quality, turning it from a reactive cleanup process into a preventative discipline. This change moves quality checks out of a separate QA stage and puts them right inside the developer's workflow.

New AI-native quality tools are now operating directly inside a developer's Integrated Development Environment (IDE). Platforms like kluster.ai provide instant, context-aware code reviews as the code is being written, creating a powerful new preventative layer. They're designed to catch logic flaws and security vulnerabilities before the code even gets close to a formal testing environment.

This proactive approach makes everything that comes after—both automated and manual testing—way more efficient. Instead of chasing down simple code defects, QA teams can finally focus their expertise on the complex, system-level validation that truly matters.

Creating a Proactive Quality Layer

The real magic of in-IDE AI is its ability to stop bugs at the source. It essentially acts as an intelligent pair programmer, constantly verifying code against your project's standards and security policies in real-time. This simple step dramatically cuts down on the number of defects that ever make it into the CI/CD pipeline.

By catching bugs right in the IDE, AI shifts quality from a downstream process to an upstream activity. This frees up QA teams to concentrate on system integrity and user experience, not just fixing preventable errors.

This preventative model fits perfectly with the strengths of traditional testing methods. Automated testing excels at consistency, eliminating the execution errors that can trip up even the most experienced QA professionals. With AI catching the initial logic errors, automation suites run cleaner and focus on what they do best: regression and integration checks. The data backs this up: large organizations with over 10,000 employees already have an 81.7% test automation adoption rate, a sign that teams trust it at scale.

Accelerating the Entire Development Lifecycle

When developers commit cleaner, pre-vetted code, the whole development lifecycle speeds up. Automated tests pass more often, which means fewer build failures and frustrating delays. This, in turn, allows QA engineers to dedicate their valuable manual testing time to high-impact exploratory scenarios and nuanced usability assessments—the kinds of things that still require genuine human intuition.

The result is a more collaborative and efficient workflow. Developers, empowered by AI for code analysis, produce higher-quality work from the very beginning. It's this synergy between preventative AI, precise automation, and insightful manual testing that enables teams to ship better software, faster.

Got Questions? We've Got Answers

When you're trying to figure out the right mix of automated and manual testing, a lot of practical questions come up. Let's tackle some of the most common ones.

Can Automation Completely Replace Manual Testing?

The short answer? No. It's the wrong way to think about it.

Automation is a powerhouse for repetitive, predictable tasks—think regression tests or load testing where you're just hammering the system. But it has zero human intuition. It can’t tell you if a new design feels clunky or if a user workflow is confusing.

That’s where people shine. You need a human tester for exploratory testing, usability checks, and anything that requires subjective judgment. The best QA strategies treat automation and manual testing as a partnership. Automation handles the grunt work, freeing up your team to focus on the nuanced, user-focused validation that makes a product great.

The goal isn't replacement; it's collaboration. Automation confirms the application works as specified, while manual testing ensures it works well for the people using it. This distinction is key to a mature testing mindset.

What Should I Automate First?

Go straight for your regression suite. This is almost always the biggest win right out of the gate. These are the tests you have to run over and over again to make sure new code doesn't break old features. Automating them gives you an immediate return by saving a ton of time every single release.

Once your regression tests are running smoothly on their own, here's what to target next:

- Smoke Tests: Set up automated checks for the absolute must-work parts of your app. This gives you a fast "go/no-go" signal on any new build.

- Data-Driven Tests: Any test that needs to run with dozens of different data inputs is a perfect candidate. Think of a login form you need to test with 50 different user credentials. That's a job for a script, not a person.

How Does a Hybrid Approach Work in Agile?

In an Agile sprint, a hybrid model is all about running things in parallel. Your automated tests should be hooked directly into your CI/CD pipeline, running automatically with every single commit. This gives developers instant feedback on regression and integration issues without anyone lifting a finger.

At the same time, your QA engineers are manually testing the new features being built in that sprint. They're doing exploratory testing, trying to break things in creative ways, and checking for weird edge cases a script would never think of. This dual-track approach gives you the best of both worlds: the speed of automation and the deep, contextual quality that only a human can provide.
