Understanding alpha testing and beta testing in software testing: Key differences

Think of alpha testing and beta testing as two completely different, but equally critical, quality checkpoints before your product ever sees the light of day. They aren't interchangeable; they're sequential steps designed to stress-test your software from the inside out.

Alpha testing is your internal proving ground. It’s done in a controlled lab-like setting by your own team—developers, QA engineers, maybe a few brave product managers. The goal here is simple: hunt down the big, ugly, show-stopping bugs before anyone outside your company walls ever sees the code.

Beta testing, on the other hand, is when you release your product into the wild. You hand it over to real users in their own environments—on their janky Wi-Fi, their old laptops, and their weirdly configured operating systems. This is all about gathering feedback on usability, performance, and the overall user experience.

Defining Core Roles in Modern Development

Alpha and beta testing act as essential quality gates, but they’re chasing different dragons. Understanding what each phase brings to the table helps teams build software that’s not just functional, but genuinely user-centric. Alpha testing is really the first line of defense, making sure the product is stable enough to even be considered for external eyes.

Beta testing is where you find out if you’ve actually built something people want to use. It moves past simple functionality and starts asking the tough questions: Is this thing intuitive? Does it solve a real problem for our target audience? The feedback from this phase is pure gold for gauging user satisfaction and market readiness.

Key Objectives for Each Phase

While both phases are about improving quality, their specific missions are miles apart. Breaking them down makes their strategic value crystal clear.


Alpha Testing Goals:

- Find and squash critical bugs and showstoppers.

- Validate that all the core features actually work.

- Confirm the system is stable enough to survive in the real world.


Beta Testing Goals:

- Get raw, unfiltered feedback on usability and the user journey.

- Discover weird bugs that only appear on specific hardware or network setups.

- Gauge market acceptance and collect ideas for what to build next.

Alpha testing is about building internal confidence that the product works. Beta testing is about building market confidence that the product matters to real people.

This two-pronged approach lets teams methodically de-risk a launch. As you can see, these stages are complementary, not redundant. They are foundational pillars of a solid quality assurance strategy. To go a level deeper, check out our complete guide on software testing best practices.

A Detailed Comparison of Alpha and Beta Testing

While alpha and beta testing both chase the same ultimate goal—better software—they are fundamentally different beasts. Think of it this way: alpha testing is the internal dress rehearsal with the cast and crew, while beta testing is the first preview performance for a hand-picked audience. You absolutely need both, as they uncover completely different kinds of problems at different stages.

The biggest split comes down to control and environment. Alpha testing happens in a sterile, controlled lab setting. It's run by your internal teams—QA engineers, developers, product managers—who simulate user actions. In stark contrast, beta testing is thrown into the wild, unpredictable real world, running on the actual hardware and janky Wi-Fi of external users.

This single difference shapes everything else. Alpha testing is all about hunting for show-stopping bugs, validating that core features actually work, and making sure the build is stable enough to survive outside your company’s walls. Beta testing, on the other hand, shifts the focus entirely to the user experience. It's less about "does it crash?" and more about "is it confusing?" or "is this even useful?"

Participants and Their Perspectives

The people involved in each phase couldn't be more different, and that's by design. Alpha testers are your internal experts. They know the product's architecture, its business goals, and exactly where to poke to make things break. Their feedback is technical, precise, and often tied directly to predefined test cases. They’re brilliant at finding the bugs you expect to find.

Beta testers are the real deal: actual end-users who represent your target market. They have zero inside knowledge, which is their superpower. Their feedback is a raw, unfiltered reflection of the true user experience. They're the ones who will discover that your app drains the battery on a three-year-old phone or is impossible to use with a spotty connection. These are the problems your internal team would rarely think to look for.

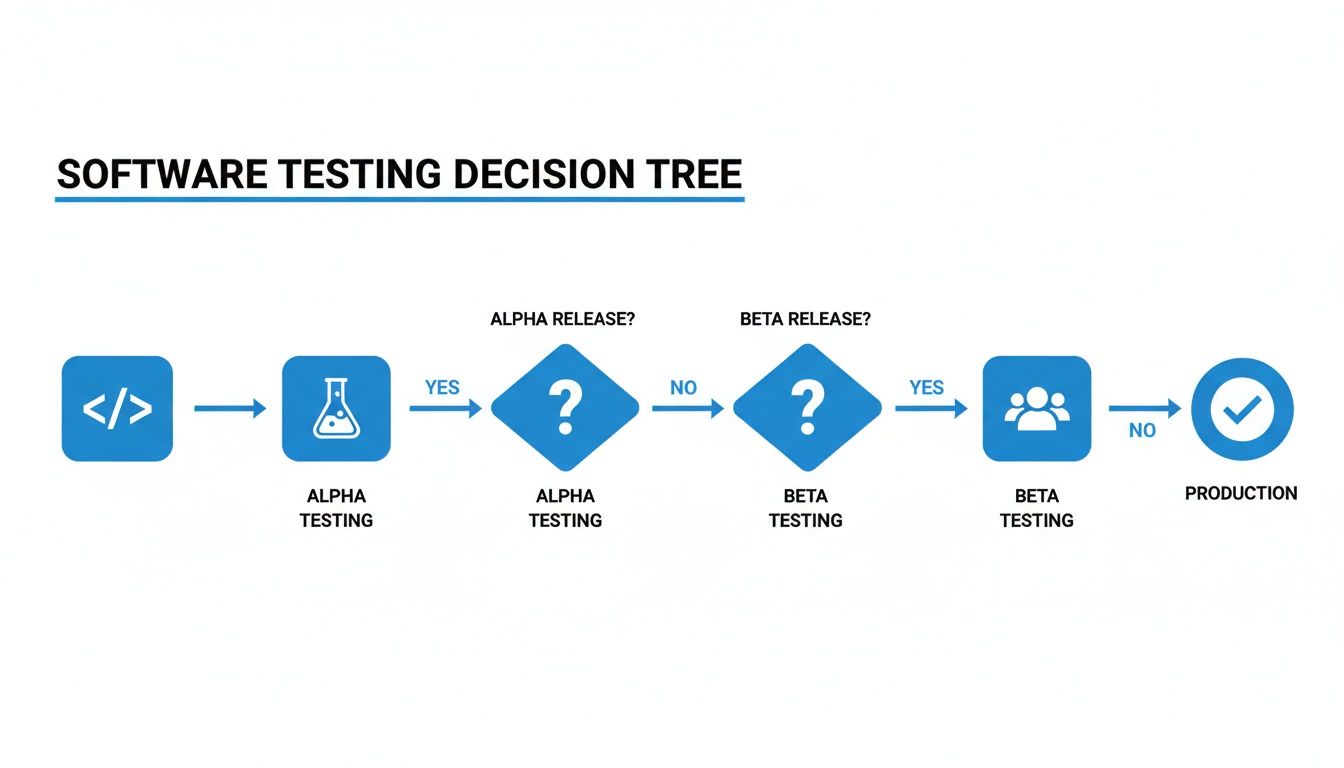

This flowchart clearly illustrates how alpha testing acts as a critical quality gate before a product ever sees the light of day in a beta release.

As you can see, it’s a sequential process. You can't just skip the internal check and hope for the best with real users.

Objectives, Scope, and Success Metrics

To really nail down how these two stages work together, it's worth exploring the nuances in Alpha vs. Beta Testing: The Two Lenses of Pre-Launch Confidence, which offers a great breakdown of their distinct roles.

The scope of each phase is also sharply different. Alpha testing is exhaustive, often mixing white-box (looking at the code) and black-box (just using the interface) methods to ensure every corner of the system is stable. Beta testing is almost exclusively a black-box activity. Your users don't know or care about the code; they only care about what happens on their screen.
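To make the distinction concrete, here is a minimal Python sketch built around a hypothetical `apply_discount` function (it is an illustration, not part of any real product). The black-box check only feeds in inputs and inspects outputs, the way a beta tester experiences the product; the white-box checks are written with knowledge of the function's internal branches, as an alpha tester with source access might write them.

```python
def apply_discount(total: float, is_member: bool) -> float:
    """Hypothetical function under test: members get 10% off orders of 100 or more."""
    if is_member and total >= 100:
        return round(total * 0.9, 2)
    return total


def black_box_check() -> bool:
    # Black-box: only observable behavior matters; no knowledge of the branches.
    return apply_discount(200.0, True) == 180.0


def white_box_checks() -> bool:
    # White-box: one case per internal branch, including the exact threshold
    # boundary, which a tester can only target after reading the condition.
    cases = [
        ((100.0, True), 90.0),    # boundary: discount branch taken
        ((99.99, True), 99.99),   # just below threshold: no discount
        ((150.0, False), 150.0),  # non-member: no discount
    ]
    return all(apply_discount(*args) == expected for args, expected in cases)


print(black_box_check(), white_box_checks())
```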

Alpha testing answers the question, "Does the product work?" Beta testing answers the question, "Do people want to use this product?" Both answers are essential for success.

Success, naturally, is measured differently for each.

- Alpha Testing Success: Did we find and fix the critical bugs? Is test-case coverage solid? Is the system stable?

- Beta Testing Success: Are user satisfaction scores high? Did we get a ton of actionable feedback? Can users complete key tasks easily? Are they coming back to use the app?
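As a rough illustration, the beta-side questions above can be turned into numbers. The sketch below assumes a hypothetical per-tester record with `completed_task`, `satisfaction` (on a 1 to 5 scale), and `returned` fields; a real program would define these metrics to fit its own product and analytics.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class BetaSession:
    """Hypothetical per-tester record collected during a beta."""
    tester_id: str
    completed_task: bool  # did the tester finish the key task?
    satisfaction: int     # self-reported score on a 1-5 scale
    returned: bool        # did the tester come back in a later week?


def beta_metrics(sessions: list[BetaSession]) -> dict[str, float]:
    """Summarize task completion rate, average satisfaction, and return rate."""
    n = len(sessions)
    if n == 0:
        raise ValueError("no beta sessions to summarize")
    return {
        "task_completion_rate": sum(s.completed_task for s in sessions) / n,
        "avg_satisfaction": mean(s.satisfaction for s in sessions),
        "return_rate": sum(s.returned for s in sessions) / n,
    }


sessions = [
    BetaSession("t1", True, 4, True),
    BetaSession("t2", False, 2, False),
    BetaSession("t3", True, 5, True),
    BetaSession("t4", True, 3, False),
]
print(beta_metrics(sessions))
```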

A successful alpha phase gives you a product that’s solid enough not to embarrass you. A successful beta phase gives you a product that the market has actually validated, armed with insights you need to nail the official launch.

To make this crystal clear, here’s a side-by-side breakdown of the key differences. This table is a handy reference for quickly grasping the unique job each phase plays.

Key Differentiators Between Alpha and Beta Testing

| Attribute | Alpha Testing | Beta Testing |
|---|---|---|
| Timing | Right after development wraps, but long before any public release. | Follows a successful alpha phase, happening just before the final launch. |
| Participants | The internal crew: QA, developers, and product managers. | Real, external users who match your target audience profile. |
| Environment | A controlled, simulated lab environment inside the company. | The uncontrolled, messy, real world on users' own devices. |
| Primary Goal | Find and crush critical bugs, stability problems, and showstoppers. | Get feedback on usability, user experience, and real-world value. |
| Testing Approach | A healthy mix of white-box (code-level) and black-box testing. | Almost entirely black-box testing, focused on the user interface. |
| Data Collected | Technical detail: bug reports, system logs, crash data. | Mostly qualitative feedback (user comments, satisfaction scores), plus usage data. |

Ultimately, alpha and beta testing aren't an either/or choice. They are two essential, complementary stages that pressure-test your product from two completely different angles—technical stability and user desirability. Skipping one is a recipe for a painful launch day.

The Strategic Role of Testing in Global Markets

Launching a product globally isn’t just about translating text. It’s about adapting your entire quality assurance strategy. A cookie-cutter approach to alpha and beta testing rarely survives contact with multiple markets, because user expectations, tech infrastructure, and legal rules vary widely from one region to another. What gets celebrated in a tech-heavy market like North America could completely flop in the mobile-first world of Asia-Pacific.

Strategic testing is all about recognizing these differences and using them to your advantage. It means ditching the standardized checklist and tailoring every single test phase to the specific market you're trying to win. Your beta testing protocols, in particular, have to account for these local nuances to have any shot at a successful international launch.

North America: A Focus on Brand Reputation

In North America, brand reputation is everything, and users will drop you in a heartbeat. This means pre-launch testing has to be incredibly strict. The market expects a virtually perfect product on day one, making both alpha and beta testing absolutely critical for protecting your brand. Companies here often run exhaustive closed beta tests with industry influencers and experts to smooth out every last wrinkle.

Europe: Navigating the Regulatory Maze

Europe is a whole different ballgame, dominated by data privacy and security. Regulations like GDPR completely change how you run a beta test. Any testing that involves real users has to put data protection first, which means using anonymized data sets and ultra-secure environments. A security flaw that exposes testers' personal data during a European beta can bring massive legal and financial penalties.
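One common building block, shown here as a minimal sketch rather than a compliance recipe, is replacing tester identifiers with keyed hashes before feedback reaches analysts. Note that under GDPR this counts as pseudonymization, not anonymization: whoever holds the key can re-link the data, so the key must be stored separately and access-controlled. The `SECRET_KEY` value and the record field names are placeholders.

```python
import hashlib
import hmac

# Placeholder only: in practice, load the key from a secrets manager,
# keep it apart from the feedback data, and rotate it per policy.
SECRET_KEY = b"replace-with-a-managed-secret"


def pseudonymize(tester_email: str, key: bytes = SECRET_KEY) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of a normalized tester identifier."""
    normalized = tester_email.strip().lower()
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_feedback(record: dict, key: bytes = SECRET_KEY) -> dict:
    """Drop direct identifiers and keep only a pseudonymous tester ID."""
    direct_identifiers = {"email", "name", "phone"}
    cleaned = {k: v for k, v in record.items() if k not in direct_identifiers}
    cleaned["tester_id"] = pseudonymize(record["email"], key)
    return cleaned


raw = {"email": "Ana@Example.com", "name": "Ana", "comment": "Checkout froze on step 3"}
print(scrub_feedback(raw))
```

Because the same key always yields the same ID, feedback from one tester can still be grouped across sessions without analysts ever seeing the email address.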

A global launch strategy is only as strong as its weakest link. Failing to adapt your testing to local regulations and user behaviors is a direct path to market rejection, regardless of your product's quality.

Asia-Pacific: The Mobile-First Frontier

The Asia-Pacific market is defined by its colossal, mobile-first user base and staggering device diversity. Beta testing here isn't about crafting one perfect user experience; it’s about making sure your product performs across a huge spectrum of smartphones, operating systems, and network conditions. If your product isn't optimized for lower-end devices or shaky networks, it will struggle to get any traction.
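A simple way to keep that spectrum visible is to enumerate a coverage matrix and track which combinations beta testers have actually exercised. The device tiers, OS versions, and network profiles below are illustrative placeholders, not a recommended list.

```python
from itertools import product

# Illustrative placeholders; substitute the devices and networks your market uses.
DEVICE_TIERS = ["low-end", "mid-range", "flagship"]
OS_VERSIONS = ["Android 11", "Android 13", "iOS 16"]
NETWORKS = ["2G/edge", "3G", "4G", "Wi-Fi"]


def coverage_gaps(tested: set[tuple[str, str, str]]) -> list[tuple[str, str, str]]:
    """List every device/OS/network combination no beta tester has reported from."""
    return [combo for combo in product(DEVICE_TIERS, OS_VERSIONS, NETWORKS)
            if combo not in tested]


# Combinations observed in (hypothetical) beta telemetry so far.
tested = {
    ("flagship", "iOS 16", "Wi-Fi"),
    ("mid-range", "Android 13", "4G"),
    ("low-end", "Android 11", "3G"),
}
gaps = coverage_gaps(tested)
total = len(DEVICE_TIERS) * len(OS_VERSIONS) * len(NETWORKS)
print(f"{len(gaps)} of {total} combinations untested")
```

The untested list is a natural input for targeted recruiting: if no one on a low-end device over a slow network has signed up, that is where to look next.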

These regional dynamics are fueling the explosive growth of the global beta testing software market, which is projected to reach USD 3.9 billion by 2035. North America currently commands a 40% share, driven by its obsession with robust pre-launch validation. Europe is next with 30%, and 70% of firms there make encryption a top priority during testing.

Meanwhile, Asia-Pacific is the fastest-growing piece of the pie, powered by 2.1 million developers in India, China, and Japan, where 65% of projects are mobile-centric. You can discover more insights about the global beta testing market to dig into the data. The numbers don't lie: tailoring alpha and beta testing to regional realities isn't just a good idea—it's an economic must for anyone serious about global success.

Tying It All Together: Testing in Modern CI/CD and AI Workflows

The days of treating alpha and beta testing as isolated events at the end of a waterfall cycle are long gone. In modern software delivery, these principles are baked directly into the Continuous Integration/Continuous Deployment (CI/CD) pipeline. Testing is no longer a final gate—it's an ongoing, automated process. The goal is to build quality in from the very first line of code.

What this really means is that every single code commit can trigger a series of automated checks that function like a mini-alpha test. These checks verify new features, catch regressions, and ensure the latest code doesn't break the build. By embedding these quality gates right into the pipeline, teams can stay in a constant state of release readiness.
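A minimal sketch of such a gate, assuming a Python project where each check is an ordinary command: the script runs every check and exits nonzero if any fails, which most CI systems treat as a failed step. The specific commands in `EXAMPLE_CHECKS` (byte-compiling a hypothetical `src` directory, running `pytest`) are assumptions about your tooling, not requirements.

```python
import subprocess
import sys


def run_gate(checks: list[tuple[str, list[str]]]) -> int:
    """Run each check command; return 0 if all pass, 1 if any fail."""
    failures = []
    for name, argv in checks:
        # Each check is an ordinary command; a nonzero exit code means it failed.
        result = subprocess.run(argv, capture_output=True, text=True)
        passed = result.returncode == 0
        print(f"[{'PASS' if passed else 'FAIL'}] {name}")
        if not passed:
            failures.append(name)
            print(result.stdout + result.stderr)  # surface the check's own output
    if failures:
        print("Blocking merge; failed checks: " + ", ".join(failures))
        return 1
    return 0


# Example checks for a Python repo (assumed tooling; swap in your own).
EXAMPLE_CHECKS = [
    ("byte-compile sources", [sys.executable, "-m", "compileall", "-q", "src"]),
    ("unit tests", [sys.executable, "-m", "pytest", "-q"]),
]

# In the CI job, end the script with:
#     sys.exit(run_gate(EXAMPLE_CHECKS))
```

Running every check instead of stopping at the first failure gives developers the full list of problems from a single pipeline run.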

Continuous Alpha Testing, Straight from the IDE

The whole "shift-left" movement is about pushing testing even earlier, right into the developer's Integrated Development Environment (IDE). This is where automated, AI-assisted code review tools come into play, acting as a powerful form of continuous alpha testing. They give developers real-time feedback as they write or generate code.

This screenshot shows exactly what I mean—an AI tool providing instant, contextual feedback inside the IDE, flagging potential issues before they're ever committed. This approach lets developers fix things like AI-generated code hallucinations or security flaws on the spot. You can see how this enhances quality by reading our deep dive on test automation and quality assurance.

By catching these initial problems in the IDE, your dedicated alpha and beta phases can focus on what they're best at: complex integration challenges and true user experience validation. The whole process becomes more streamlined, cutting down review bottlenecks and getting code merged faster.

AI Is a Game-Changer for Feedback Loops

Integrating quality checks directly into the developer's workflow has a massive impact on efficiency. Think about it: traditional testing often eats up 30-50% of development costs and 50% of the timeline. Catching bugs early isn't just nice to have; it's critical.

Tools like kluster.ai provide real-time AI code reviews that act as instant, in-IDE alpha checks. We’ve seen this cut review times in half and ensure AI-generated code actually aligns with the original intent, long before it ever gets near a formal beta. For developers relying on AI assistants, this integration enforces standards, minimizes errors, and speeds up merges. It shows how much earlier alpha-style quality checks can start when the goal is shipping trusted, production-ready code.

By making testing a continuous activity, teams ensure code is production-ready from the moment it’s written, not after some high-stakes validation phase at the end.

To make this work in rapid release cycles, teams lean heavily on smart workflow management and, you guessed it, more automation. Understanding how to set up these modern environments is key. A practical guide on the finer points of automation in DevOps is invaluable here. This relentless focus on automation is what makes modern alpha and beta testing faster, more reliable, and perfectly suited for the pace of today's development.

Real-World Examples and the Hard ROI of Testing

Abstract ideas about alpha and beta testing don't really click until you see them in action. The strategic value isn't just theory; it translates directly into dodging risks, boosting user engagement, and saving a ton of money. Looking at how different industries handle these testing phases shows a clear, tangible return on investment (ROI).

Take a financial services company building a new mobile banking app. During their alpha testing, they used internal QA teams to simulate various cyberattacks. They found critical encryption flaws that could have exposed sensitive user data. Catching and fixing those issues before a single customer saw the app saved them from potentially millions in regulatory fines and protected their reputation.

Then came the beta program. They gave a select group of external users access, specifically asking them to test the new loan application workflow. The feedback was gold. It highlighted specific points of friction that were causing people to abandon the process midway. After tweaking the UI based on that real-world input, the company saw its loan application completion rates jump by an incredible 40%.
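Friction points like these usually surface by comparing how many testers reach each step of a flow. Below is a minimal sketch with made-up step names and counts, purely to show the arithmetic; it does not reproduce the bank's actual data.

```python
def funnel_dropoff(step_counts: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Return the share of users lost between each step and the next one."""
    drops = []
    for (step, count), (next_step, next_count) in zip(step_counts, step_counts[1:]):
        lost = (count - next_count) / count if count else 0.0
        drops.append((f"{step} -> {next_step}", round(lost, 3)))
    return drops


# Hypothetical beta counts for a loan application flow.
steps = [
    ("start application", 400),
    ("personal details", 360),
    ("income verification", 198),
    ("review and submit", 180),
]
worst = max(funnel_dropoff(steps), key=lambda pair: pair[1])
print(worst)  # ('personal details -> income verification', 0.45)
```

The step with the steepest drop is where qualitative beta feedback ("I didn't know which documents to upload") is most worth chasing.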

Proving Value When the Stakes Are High

You see the same pattern in other heavily regulated fields, like healthcare. A software provider building an Electronic Medical Records (EMR) system used its alpha testing phase to hunt down and kill critical integration bugs. If those had slipped through, they could have messed up physician workflows and even compromised the integrity of patient data.

Later, during beta testing, a pilot group of doctors and clinical staff used the system in their actual day-to-day work. Their feedback on the prescription management module led to usability improvements that were absolutely crucial for getting people to actually use the new system. The result? A 30% higher adoption rate among clinical staff after the official launch. It's a clear illustration of why both alpha and beta testing are non-negotiable.

Investing in pre-launch testing isn't an expense; it's a direct investment in user adoption and risk management. Fixing a bug after release costs far more than catching it in a controlled alpha or beta environment.

The economic impact is undeniable. Case studies from across the industry consistently show that a well-thought-out testing strategy pays for itself many times over. The global software testing market is expected to rocket from USD 48.17 billion in 2025 to a massive USD 93.94 billion by 2030, which tells you everything you need to know about its critical role in modern development. To get a better sense of the market forces behind this growth, you can dig into these global testing market findings.
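For a sense of scale, those two figures imply a compound annual growth rate (CAGR) of roughly 14% per year, assuming five compounding periods between 2025 and 2030. The arithmetic is a one-liner:

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market projection quoted above: USD 48.17B (2025) -> USD 93.94B (2030).
rate = cagr(48.17, 93.94, 2030 - 2025)
print(f"Implied CAGR: {rate:.1%}")  # Implied CAGR: 14.3%
```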

Finding and Keeping the Right Testers

The insights you get from alpha and beta testing are only as good as the people you recruit. Botch the selection process, and you'll end up with skewed data and a false sense of confidence. Getting this right is the bedrock of a solid testing program, but the approach for each phase is completely different.

For alpha testing, you're looking inward, pulling together a diverse internal crew. Your QA engineers are the obvious first draft picks, but stopping there is a mistake. Loop in developers and product managers. Developers have a knack for spotting deep architectural flaws, while PMs can instantly tell if a feature deviates from the original business logic or user stories. This mix casts a far wider net than QA can alone.

How to Source and Manage Beta Testers

Once you’re ready for beta testing, the game changes. You need to look outside the company and find people who represent your actual customers. The first step is to build out your target user personas. These aren't just vague descriptions; they're detailed profiles covering demographics, technical comfort levels, and daily habits. Without clear personas, you risk recruiting people who will never actually use your product.

With your personas defined, you can start recruiting through a few key channels:

- Your biggest fans: Reach out to existing customers, especially those who’ve given you thoughtful feedback in the past. They're already invested.

- Your audience: Use your email lists and social media channels to announce the beta program.

- Third-party platforms: If you're starting from scratch, services that connect companies with pre-vetted beta testers can be a lifesaver.

One critical mistake to avoid is geographic bias. A 2015-2016 study of a security product’s beta test found that certain continents were massively overrepresented, while others were barely included. This is a huge red flag—you could be getting feedback that's completely irrelevant to your global user base. You can dig into the research on participant diversity in beta testing to see how to build a more balanced group.
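A quick way to catch this before feedback starts flowing is to compare each region's share of the tester pool with its share of your user base. The region names, counts, and the 0.5 to 2.0 tolerance band below are hypothetical choices for illustration.

```python
def representation_ratios(testers: dict[str, int], users: dict[str, int]) -> dict[str, float]:
    """Ratio of each region's tester share to its user-base share (1.0 = proportional)."""
    total_testers = sum(testers.values())
    total_users = sum(users.values())
    if total_testers == 0 or total_users == 0:
        raise ValueError("need at least one tester and one user")
    ratios = {}
    for region, user_count in users.items():
        if user_count == 0:
            continue  # no users there, so representation is not meaningful
        user_share = user_count / total_users
        tester_share = testers.get(region, 0) / total_testers
        ratios[region] = tester_share / user_share
    return ratios


def flag_bias(ratios: dict[str, float], low: float = 0.5, high: float = 2.0) -> dict[str, str]:
    """Label regions whose ratio falls outside the tolerance band."""
    flags = {}
    for region, ratio in ratios.items():
        if ratio < low:
            flags[region] = "underrepresented"
        elif ratio > high:
            flags[region] = "overrepresented"
    return flags


users = {"North America": 300, "Europe": 300, "Asia-Pacific": 400}
testers = {"North America": 70, "Europe": 20, "Asia-Pacific": 10}
print(flag_bias(representation_ratios(testers, users)))
```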

Good management isn't just about bug collection. It's about building a feedback engine that respects testers' time, triages issues like a pro, and communicates progress so people stay engaged.

Finally, you need a system to handle the feedback. Use a dedicated tool to collect, sort, and prioritize everything that comes in. When a tester submits a bug, acknowledge it quickly. Send out regular updates on what you've fixed and what's coming next. This kind of transparency shows your testers that their time is valued, which is the secret to keeping a steady stream of high-quality insights flowing.
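The collect, sort, and prioritize loop can be sketched with a priority queue. The severity scale, the tie-breaking on duplicate report counts, and the acknowledgement message are assumptions for illustration, not features of any particular feedback tool.

```python
import heapq
from dataclasses import dataclass, field

# Lower number = handled first. Illustrative severity scale.
SEVERITY_RANK = {"crash": 0, "data-loss": 0, "functional": 1, "usability": 2, "cosmetic": 3}


@dataclass(order=True)
class Ticket:
    sort_key: tuple = field(init=False, repr=False)
    severity: str = field(compare=False)
    summary: str = field(compare=False)
    reports: int = field(default=1, compare=False)  # duplicate reports merged before submit

    def __post_init__(self):
        # More duplicate reports push an issue ahead of others at the same severity.
        self.sort_key = (SEVERITY_RANK[self.severity], -self.reports)


class TriageQueue:
    def __init__(self):
        self._heap: list[Ticket] = []

    def submit(self, ticket: Ticket) -> str:
        heapq.heappush(self._heap, ticket)
        # Quick acknowledgement so testers know their report landed.
        return f"Thanks! We logged '{ticket.summary}' as {ticket.severity}."

    def next_ticket(self) -> Ticket:
        return heapq.heappop(self._heap)


queue = TriageQueue()
print(queue.submit(Ticket("cosmetic", "Misaligned icon on settings page")))
queue.submit(Ticket("crash", "App crashes on login"))
print(queue.next_ticket().summary)  # App crashes on login
```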

Common Questions About Alpha and Beta Testing

When you get down to the brass tacks of alpha and beta testing, a few practical questions always come up. Getting these details right helps teams sharpen their QA strategy and make smarter calls during the entire development cycle.

Can You Ever Skip Alpha or Beta Testing?

You technically can, but it's like playing Russian roulette with your product's reputation.

Skipping alpha testing is basically asking your first real users to be your internal QA team. You're throwing them into the deep end with a product that's likely riddled with critical bugs and instability. That’s a surefire way to damage your brand before you even get off the ground.

On the other hand, skipping beta testing means you're flying blind. You launch without any validation from the people who will actually use your product. You completely miss the chance to get feedback on usability, performance on different devices, and whether your product even solves a real-world problem. For most products, skipping either phase is a non-starter if you want a successful launch.

How Many Testers Do You Need?

The magic number is completely different for each phase. You're solving different problems, so you need different-sized groups.

- Alpha Testing: This is all about a small, focused group. Think 5 to 25 internal experts—your QA engineers, developers, and product managers. This is usually more than enough to sniff out the major technical flaws and glaring functional gaps.

- Beta Testing: Here, you need to go wider. You want a diverse group that truly represents your target audience. The number can range from 50 to 500 or more external users, depending on your product's complexity and how big your market is.

What’s the Difference Between Open and Closed Betas?

It really just comes down to accessibility.

A closed beta is an invite-only party for a handpicked group of users. This is perfect when you need controlled, high-quality, and detailed feedback from a specific user profile.

In contrast, an open beta throws the doors open to the public. Anyone can join. This is fantastic for stress-testing your servers and uncovering those weird, rare bugs that only appear at a massive scale.
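In code, the difference often comes down to a single access check. This is a minimal sketch, assuming a hypothetical allowlist of invited emails and a mode switch; real products typically route this through their own feature-flag or authentication system.

```python
from enum import Enum


class BetaMode(Enum):
    CLOSED = "closed"  # invite-only: a handpicked group
    OPEN = "open"      # anyone from the public can join


def can_access_beta(email: str, mode: BetaMode, invited: set[str], opted_in: bool = True) -> bool:
    """Return True if this user should receive the beta build."""
    if not opted_in:
        return False  # nobody is enrolled without agreeing to participate
    if mode is BetaMode.OPEN:
        return True
    # Closed beta: only addresses on the invite list get in.
    return email.strip().lower() in invited


INVITED = {"pilot.user@example.com"}  # hypothetical allowlist, stored lowercase
print(can_access_beta("Pilot.User@example.com", BetaMode.CLOSED, INVITED))  # True
print(can_access_beta("someone@example.com", BetaMode.CLOSED, INVITED))     # False
print(can_access_beta("someone@example.com", BetaMode.OPEN, INVITED))       # True
```

Keeping the mode as a single switch makes it easy to start closed and widen to an open beta later without touching the rest of the enrollment logic.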

Ready to eliminate bugs before they even reach QA? kluster.ai provides real-time, in-IDE code reviews to catch AI-generated errors and enforce standards as code is written. Start free or book a demo to accelerate your development cycles.