Optimization of Code: Master Techniques to Improve Code Speed

Code optimization isn't some dark art practiced by a few wizards in the corner. It's the practical, systematic process of making your software better—faster, leaner, and more reliable—without messing with what it actually does. Think of it as tuning a car engine. The car still gets you from A to B, but a well-tuned engine does it using less fuel, with more power, and with a much lower chance of breaking down.

That’s what we’re doing with our code. We're moving beyond "it works" to "it works brilliantly."

Why Bother With Code Optimization?

Getting code to just work is the first step, not the last. The real engineering challenge is turning that functional code into a rock-solid application that can handle real-world demands. This is where optimization stops being a "nice-to-have" and becomes absolutely critical. It’s the bridge between a proof-of-concept and a production-ready system.

This has become even more urgent with the explosion of AI-assisted coding. The numbers are pretty staggering: by 2025, it's expected that 84% of developers will be using AI coding tools. What's even wilder is that 41% of all new code is already being generated or touched by AI. (You can dig into these stats over at blog.exceeds.ai). This tidal wave of code has created a brand new set of problems.

The Productivity Paradox of AI-Generated Code

We're currently stuck in a weird situation I call the "Productivity Paradox." Our teams can churn out code faster than ever before, but it all comes to a grinding halt at the review stage. Without proper checks and balances, this flood of AI-generated code is riddled with subtle performance bugs, weird logic flaws, and gaping security holes.

We're seeing PR review times balloon by over 90% because senior engineers are stuck playing whack-a-mole, trying to verify code that is almost right. It’s a classic case of more haste, less speed. This paradox proves one thing: just writing more code doesn't lead to better software. You need a disciplined approach to optimization to make sure that speed doesn't tank quality.

True optimization is more than just fixing a slow function here and there. It's about instilling a performance-first mindset that touches every part of the development cycle, from the first sketch on a whiteboard to the final deployment.

This whole process is about building software that lasts. More often than not, the need for frantic optimization comes from letting technical debt pile up. If you want to build for the long haul, you have to learn how to reduce technical debt from the get-go.

The Three Pillars of Code Optimization

When we talk about optimization, we're really chasing three core goals. Each one tackles a different aspect of what makes software great, and together they form the foundation of any solid optimization strategy.

The table below breaks down these core pillars, their main objectives, and how we typically measure success for each.

| Pillar | Primary Goal | Key Metrics |
|---|---|---|
| Performance | Make the application faster. | Latency (ms), Throughput (RPS), FPS |
| Efficiency | Use fewer system resources. | CPU Usage (%), Memory (MB/GB), I/O |
| Reliability | Ensure the application is stable. | Uptime (%), Error Rate (%), MTBF |

Let's quickly unpack what these mean in the real world.

- Performance Enhancement: This is the one everyone thinks of first. It’s all about speed—slashing latency so the UI feels snappy and cranking up throughput so your servers can handle more traffic.

- Resource Reduction: This is about making your code lean. Minimizing CPU, memory, and network usage isn't just about saving money on cloud bills; it's crucial for scalability and making sure your app runs smoothly on a cheap smartphone, not just a high-end gaming PC.

- Enhanced Reliability: Optimized code is predictable code. By stamping out inefficiencies, memory leaks, and race conditions, you build software that doesn't crash, hang, or throw weird errors when things get busy.

Understanding Core Optimization Principles

Jumping into code optimization without a clear strategy is like trying to fix a traffic jam by randomly honking your horn. You might make some noise, but you’re not solving the underlying problem. The best approach to the optimization of code starts with understanding its fundamental principles. It's about building a mental model for performance before you change a single line.

Think of yourself as a chef trying to run a faster kitchen. The answer isn't just chopping vegetables more quickly. It’s about mastering the whole process: knowing your ingredients (data structures), perfecting your techniques (algorithms), and nailing your timing (complexity). This kind of disciplined approach is what separates effective optimization from frantic, counterproductive tinkering.

Measure First, Ask Questions Later

The single most important rule in this game is to measure, don't guess. Our intuition about where performance bottlenecks hide is notoriously bad. We often point fingers at the most complex-looking code, but the real culprit is frequently something far more mundane, like a simple database query or an overlooked loop chewing up resources.

This is where profiling becomes your best friend. A profiler is a tool that watches your application as it runs, collecting hard data on how much time and memory each function is actually using. It gives you a data-backed map that shows you exactly where your code is spending its time. Without this, you’re flying blind.
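To make that concrete, here is a minimal sketch of profiling a single call with Python's built-in cProfile and pstats modules. The functions `slow_sum_of_squares` and `handle_request` are hypothetical stand-ins for your own code, not part of any real application.

```python
import cProfile
import io
import pstats


def slow_sum_of_squares(n):
    """Deliberately wasteful: builds a full list before summing it."""
    squares = [i * i for i in range(n)]
    return sum(squares)


def handle_request(n):
    """Hypothetical entry point that calls the function under suspicion."""
    return slow_sum_of_squares(n)


def profile_call(func, *args):
    """Run func under cProfile and return (result, text report)."""
    profiler = cProfile.Profile()
    profiler.enable()
    result = func(*args)
    profiler.disable()

    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    # Sort by cumulative time so the most expensive call paths come first.
    stats.sort_stats("cumulative").print_stats(10)
    return result, buffer.getvalue()


if __name__ == "__main__":
    result, report = profile_call(handle_request, 200_000)
    print(report)
```

The report lists each function with its call count and time spent, which is usually enough to tell whether the code you suspected is actually the expensive part.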

The first rule of optimization is you do not optimize. The second rule is you do not optimize without data. You must measure first to identify the actual bottlenecks before attempting any changes.

Acting on assumptions leads straight to a classic mistake: premature optimization. This is when you optimize code before you even know if it's necessary, often sacrificing clarity and maintainability for a performance gain that doesn't exist. You end up with convoluted, hard-to-read code that solves a problem you never actually had. The goal here is surgical precision, not a shotgun blast.

Demystifying Algorithmic Complexity

Once a profiler has pointed you to a genuine bottleneck, the next question is why it's so slow. The answer often comes down to algorithmic complexity, which is just a fancy way of describing how an algorithm’s resource usage (like time or memory) scales as the amount of data grows. This is where Big O notation comes in handy.

Don't let the name intimidate you; the concept is actually pretty straightforward. Big O gives us a shared language to talk about the efficiency of different approaches. Think of it like comparing different ways to find a book in a massive library.

- O(1) - Constant Time: This is like knowing the book's exact shelf and location number. It doesn't matter if the library has 100 books or 10 million; the time to find it is always the same. Accessing an array element by its index is a classic O(1) operation.

- O(n) - Linear Time: This is like starting at the first shelf and scanning every single book until you find the one you want. The time it takes is directly proportional to the number of books (n). A simple for loop that touches every item in a list is O(n).

- O(n²) - Quadratic Time: Now imagine for every book you pick up, you have to compare its title to every other book in the entire library. As the number of books grows, the amount of work grows with the square of that number: ten times the books means a hundred times the comparisons. This is the hallmark of nested loops and can bring a system to its knees with even a moderate amount of data.
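The three growth rates above can be seen directly by counting steps. This is an illustrative sketch only; the function names are made up for the example, and the step counters exist purely to show how the work scales.

```python
def get_by_index(items, i):
    """O(1): one step regardless of how long the list is."""
    return items[i]


def linear_search(items, target):
    """O(n): may inspect every element once. Returns (index, steps)."""
    steps = 0
    for index, value in enumerate(items):
        steps += 1
        if value == target:
            return index, steps
    return -1, steps


def count_duplicate_pairs(items):
    """O(n^2): compares each element with every later one. Returns (pairs, steps)."""
    steps = 0
    pairs = 0
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            steps += 1
            if items[i] == items[j]:
                pairs += 1
    return pairs, steps


if __name__ == "__main__":
    for n in (10, 100, 1000):
        data = list(range(n))
        _, search_steps = linear_search(data, -1)  # worst case: not found
        _, pair_steps = count_duplicate_pairs(data)
        print(n, search_steps, pair_steps)
```

Going from 100 to 1,000 items multiplies the linear search's steps by 10, while the nested-loop comparison count grows by roughly 100.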

Getting comfortable with these concepts is crucial. It helps you predict how your code will behave under pressure and choose the right "technique" for the job, ensuring your application stays snappy and efficient as it scales. By pairing hard data from profiling with a solid understanding of complexity, you can make smart decisions that deliver real, impactful performance wins.

Essential Techniques for Optimizing Your Code

Once you've profiled your app and hunted down the real bottlenecks, it's time to get your hands dirty. Optimization of code isn't about finding one silver bullet. It's about having a toolkit and knowing which tool to use for the job.

Great performance rarely happens by accident or through a single, sweeping change. It's usually the result of a series of small, smart improvements that add up to something huge.

Here, we'll get into the high-impact techniques you can actually use to make your code leaner and faster. We'll start with the big wins and work our way through strategies that can give your application a serious performance boost.

Start with Smarter Algorithms

Let’s get this out of the way: the single most powerful optimization you can make is often just picking a better algorithm. Think of an algorithm as a recipe for solving a problem. Some recipes are just fundamentally faster than others, especially when you start cooking for a crowd.

Picking the right one is like choosing a race car over a tricycle for the Indy 500.

Imagine you need to sort a list of one million users.

- A Bubble Sort would shuffle through the list again and again, comparing two users at a time and swapping them. It’s simple to grasp but painfully slow for big datasets, with a time complexity of O(n²).

- A Quicksort, on the other hand, uses a "divide and conquer" approach. It picks a pivot, partitions the list into items smaller and larger than that pivot, and recursively sorts each part. Its average complexity is O(n log n), which is worlds faster at scale.

For a million items, that's the difference between roughly a trillion operations and about twenty million. It's not even close.
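The gap is easy to check with a little arithmetic. The sketch below includes a textbook bubble sort for reference and a helper, `estimated_operations`, that is just an assumption-laden back-of-the-envelope estimate (it ignores constant factors). Note that Python's built-in `sorted` uses Timsort rather than Quicksort, but it has the same O(n log n) growth.

```python
import math


def bubble_sort(items):
    """Plain O(n^2) bubble sort; returns a new sorted list."""
    result = list(items)
    n = len(result)
    for end in range(n - 1, 0, -1):
        swapped = False
        for i in range(end):
            if result[i] > result[i + 1]:
                result[i], result[i + 1] = result[i + 1], result[i]
                swapped = True
        if not swapped:  # no swaps means the list is already sorted
            break
    return result


def estimated_operations(n):
    """Rough operation counts for an n^2 and an n log n algorithm."""
    quadratic = n * n
    linearithmic = round(n * math.log2(n))
    return quadratic, linearithmic


if __name__ == "__main__":
    quadratic, linearithmic = estimated_operations(1_000_000)
    print(f"n^2:       {quadratic:,}")
    print(f"n log2 n:  {linearithmic:,}")
    print(f"ratio:     {quadratic / linearithmic:,.0f}x")
```

For one million items the quadratic estimate is a trillion operations, while the n log n estimate is about twenty million, a difference of roughly fifty thousand times.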

The lesson here is simple: No amount of fine-tuning will save a fundamentally broken algorithm. Always check your core logic first.

Choose the Right Data Structure

Just as important as your algorithm is the data structure it works with. A data structure is just how you organize your data, and the wrong choice can bring even the best algorithm to its knees. It’s the difference between searching for a book in a library with a card catalog versus rummaging through a giant, unsorted pile of books.

Let's take a common task: looking up a user by their unique ID.

- Using an Array or List: If your users are stored in a simple list, finding one means you have to scan it from top to bottom. This is an O(n) operation—as your user base grows, so does the search time.

- Using a Hash Map (or Dictionary): A hash map stores data in key-value pairs. It uses a hash function to turn the user ID (the key) into a position in an internal table, so it can jump almost directly to the value. This gives you near-instant retrieval, an O(1) operation on average. The lookup time stays essentially flat whether you have 100 users or 100 million.
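Here is a small sketch of both lookups side by side. The user records and the helper names `find_user_in_list` and `build_user_index` are invented for illustration.

```python
def find_user_in_list(users, user_id):
    """O(n): scan until a matching id turns up."""
    for user in users:
        if user["id"] == user_id:
            return user
    return None


def build_user_index(users):
    """One O(n) pass that builds an id -> user dictionary."""
    return {user["id"]: user for user in users}


users = [{"id": i, "name": f"user-{i}"} for i in range(100_000)]
users_by_id = build_user_index(users)

# Same answer, very different cost per lookup:
# the list scan walks 100,000 records, the dict does one hash lookup.
assert find_user_in_list(users, 99_999) is users_by_id.get(99_999)
```

Building the index costs one pass over the data, so it pays off when you look things up more than a handful of times.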

This choice has a direct and massive impact on your code's speed. For instance, knowing how to write cleaner, faster Python with structures like those in Mastering Dictionary Comprehensions in Python is a game-changer. Getting your data structures right is step one for writing high-performance code.

Eliminate Redundant Work with Caching

Caching is a beautiful concept built on a simple idea: if a calculation is expensive and you need the answer more than once, just save it. Don't solve the same hard problem over and over. It's like writing down the answer to a tough math problem so you don't have to re-calculate it every single time.

Think of an e-commerce site displaying its "top 10 best-sellers" on the homepage. Figuring this out might require a slow, complicated database query.

- Without Caching: Every single visitor hitting the homepage forces the server to run that expensive query again. With thousands of users, your database will start to cry.

- With Caching: The first time the query runs, the result gets saved somewhere fast, such as an in-memory cache, for maybe five minutes. For those five minutes, every visitor gets the saved list instantly. The database doesn't even get touched.

This simple trick massively cuts down on server load and makes your app feel incredibly responsive. You can apply caching everywhere, from the CPU level all the way up to content delivery networks (CDNs).
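The best-sellers scenario can be sketched as a tiny time-based cache. Everything here is a simplified assumption: `TTLCache` is a hypothetical helper written for this example, `fetch_best_sellers` stands in for the slow database query, and the class is not thread-safe.

```python
import time


class TTLCache:
    """Tiny single-value cache that expires after ttl_seconds."""

    def __init__(self, loader, ttl_seconds, clock=time.monotonic):
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock
        self._value = None
        self._expires_at = None  # None means nothing is cached yet

    def get(self):
        now = self._clock()
        if self._expires_at is None or now >= self._expires_at:
            # Cache miss or expired entry: run the expensive loader once.
            self._value = self._loader()
            self._expires_at = now + self._ttl
        return self._value


query_calls = {"count": 0}


def fetch_best_sellers():
    """Stand-in for the slow 'top 10 best-sellers' database query."""
    query_calls["count"] += 1
    return [f"item-{i}" for i in range(1, 11)]


best_sellers = TTLCache(fetch_best_sellers, ttl_seconds=300)

for _ in range(1000):  # a thousand homepage visits within five minutes
    top_ten = best_sellers.get()
```

In this sketch the thousand visits trigger a single call to the loader. A production setup would more likely use a shared cache such as Redis or Memcached, and would need to decide how stale the data is allowed to get.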

Unlock Parallelism with Concurrency

Most modern computers have multiple processor cores, but by default, your code probably only uses one of them. Concurrency is the art of breaking a big job into smaller, independent pieces that can run at the same time on different cores.

It's like hiring a team of chefs to cook a big dinner. Instead of one person doing everything sequentially, you have one dicing vegetables, another grilling the steak, and a third preparing the dessert—all at once.

Video processing is a perfect example. Encoding a large video file can be split up. Different segments of the video can be processed in parallel on separate threads, and the results are stitched together at the end. This can turn an hours-long process into a matter of minutes.

Concurrency adds some complexity, especially around managing data that multiple threads need to access. But for CPU-heavy tasks, it's one of the most powerful tools you have for squeezing every last drop of performance out of your hardware.
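As a rough sketch of the split-and-stitch pattern, the example below uses Python's concurrent.futures. `encode_segment` is a placeholder for real CPU-heavy work (it just sums squares), and the segment sizes are arbitrary. In CPython, CPU-bound work generally needs processes rather than threads to run on multiple cores, which is why the demo uses `ProcessPoolExecutor`.

```python
from concurrent.futures import ProcessPoolExecutor


def encode_segment(segment):
    """Stand-in for CPU-heavy work on one independent segment."""
    start, stop = segment
    return sum(i * i for i in range(start, stop))


def split_into_segments(total, parts):
    """Split range(total) into up to `parts` contiguous (start, stop) chunks."""
    step = -(-total // parts)  # ceiling division
    return [(start, min(start + step, total)) for start in range(0, total, step)]


def encode_all(segments, executor):
    """Process segments on the executor's workers; map() keeps input order."""
    return list(executor.map(encode_segment, segments))


if __name__ == "__main__":
    segments = split_into_segments(2_000_000, parts=8)
    with ProcessPoolExecutor() as pool:
        partial_results = encode_all(segments, pool)
    # Stitch the independent results back together.
    print(sum(partial_results))
```

Because each segment is independent, no shared state needs locking here. The complexity the text mentions shows up as soon as workers have to read or write the same data.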

Navigating the Hidden Trade-Offs of Optimization

Chasing after optimized code can feel like a straightforward quest for pure speed, but the reality is a lot messier. Every decision to make your code faster comes with a price, creating a web of hidden trade-offs that every developer has to navigate. The most common one is the constant tug-of-war between raw performance and simple, readable code.

Think about two versions of the same function. One is clean and direct—easy for a new teammate to pick up, and a breeze to debug. The other is a work of art in clever bit-shifting and pointer magic that runs 30% faster. That performance gain is tempting, right? But the second version might be so convoluted that it becomes a maintenance nightmare. A few weeks down the line, a bug pops up, and the team burns hours just trying to figure out what the code is doing, completely wiping out any time saved by the initial speed boost.

The Readability and Maintainability Cost

When you get too aggressive with optimization, you can twist clean, expressive code into an unreadable puzzle. This isn't just about aesthetics; it hits the long-term health of your project hard. Code is read way more often than it's written, and when your team has to stop and decipher complex logic, productivity grinds to a halt.

This is a classic form of technical debt. You gain a few microseconds in execution time, but you might pay for it with hours of developer time later. The trick is to find that sweet spot where the performance gains are big enough to justify the added complexity.

A huge part of smart optimization is knowing when to stop. If an optimization makes the code's purpose unclear, you're probably trading a small, measurable performance win for a huge, unmeasurable maintenance headache.

When Optimization Introduces New Risks

Beyond just making code harder to read, some advanced optimization techniques can sneak in subtle but serious risks. Fiddling with low-level memory management or manual vectorization can bypass the very safety nets built into a language or runtime, potentially opening the door to security holes like buffer overflows.

You really have to consider the potential downsides:

- Increased Development Time: Crafting and verifying complex optimizations isn't quick. It takes deep expertise and a ton of testing, which can seriously stretch your development timelines. Is a 5% speed improvement really worth delaying a critical feature release by two weeks?

- Security Vulnerabilities: Optimizations that involve messing with memory directly or disabling compiler safety checks can accidentally create security holes that are incredibly difficult to spot.

- Reduced Portability: Code that’s been fine-tuned for a specific CPU architecture or operating system might run poorly—or not at all—on different hardware. This can lock you into a particular platform and make future migrations a massive pain.

At the end of the day, the decision to optimize needs to be a deliberate business choice, not just a technical one. It demands a clear-eyed look at the costs versus the benefits. Before you dive into a complicated refactor, always ask: is this performance bottleneck actually hurting our users? And is the proposed fix worth the long-term cost of owning that code? More often than not, keeping things simple is the smartest long-term play.

Integrating Modern Tools for Continuous Optimization

The days of treating optimization of code as a clean-up phase after development are over. That old workflow—write the code, run some benchmarks, then go hunting for bottlenecks with a profiler—is just too slow for how we build software today. The sharpest teams are now shifting left, weaving optimization right into their daily grind.

This isn't about saving performance tuning for the end; it's about treating it as a core part of quality from the very first line of code. The idea is to catch and squash inefficiencies the moment they appear, long before they can pile up into serious technical debt. This is where a new wave of smart tooling is completely changing the game.

The Rise of In-IDE AI Code Review

Instead of waiting for a pull request to get flagged, modern tools give you instant feedback right inside your IDE. Platforms like kluster.ai essentially act as an AI-powered pair programmer, analyzing your code as you write it.

This is way more than just a fancy spell-checker for code. It uses specialized AI agents that actually understand your intent and the surrounding context, flagging potential gotchas in real-time:

- Performance Issues: It can spot when you've chosen an inefficient algorithm, written an unnecessary loop, or used a less-than-ideal data structure, all before you even think about committing.

- Logic Flaws: By grasping what you're trying to accomplish, it can point out where your code is technically correct but logically broken, heading off those subtle, hard-to-find bugs.

- Security Gaps: It actively looks for common vulnerabilities, making sure security is baked in from the start, not bolted on as an afterthought.

This screenshot shows you exactly what that looks like—immediate, actionable feedback from a tool like kluster.ai, right where you're working.

The real magic here is how tight the feedback loop becomes. When you can spot an issue in seconds, you can fix it instantly while the code is still fresh in your mind. This completely sidesteps the painful, time-consuming process of trying to remember why you wrote something a certain way days or weeks later.

Automating Optimization in the CI/CD Pipeline

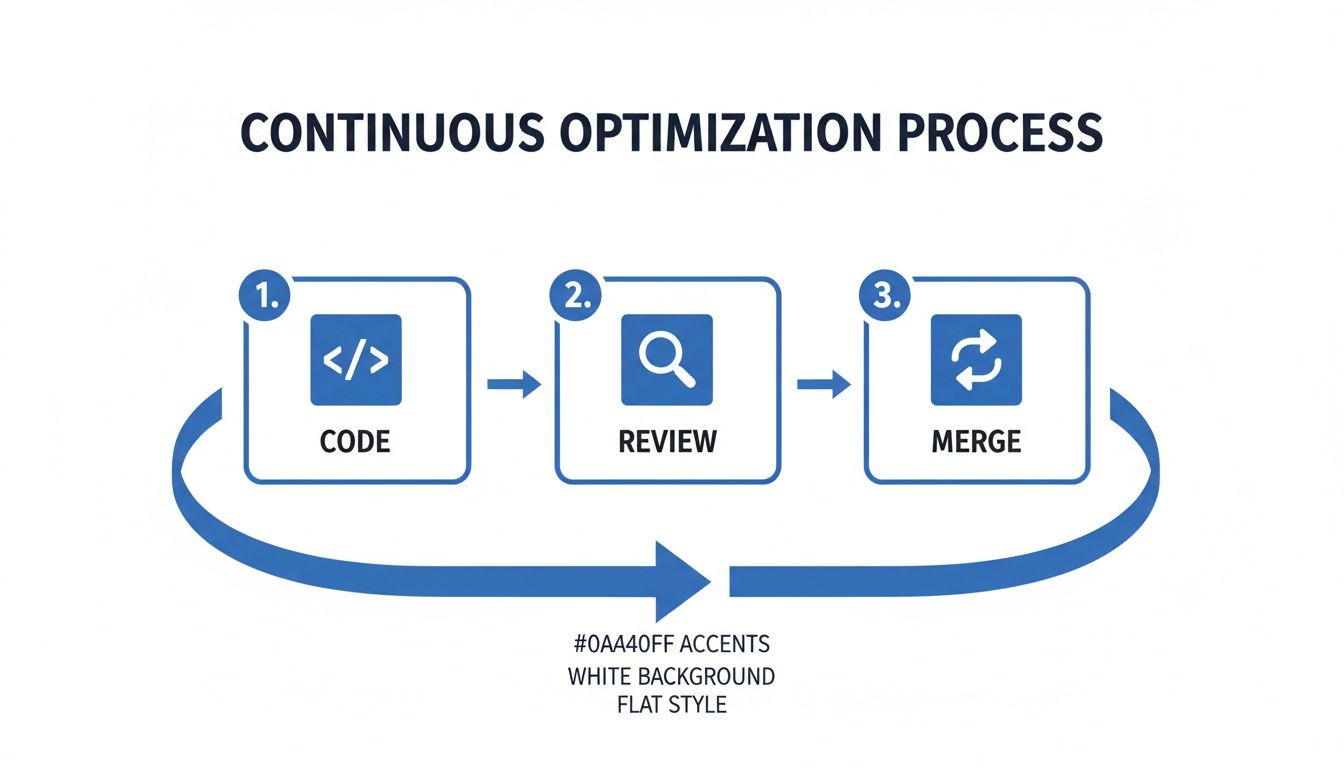

Real-time validation is your first line of defense, but this strategy needs to cover the entire development lifecycle. That's where integrating these automated checks into your Continuous Integration/Continuous Deployment (CI/CD) pipeline comes in.

By setting this up, no new code can be merged until it passes a whole gauntlet of automated checks. It’s a powerful way to enforce performance and quality standards across the board, guaranteeing that every single contribution is performant, secure, and reliable by design. This approach transforms optimization of code from a manual, one-off task into a continuous, automated habit.

This shift is absolutely critical now that AI code generation is becoming the norm. The sheer volume of code being cranked out has skyrocketed, making manual review an impossible bottleneck. In fact, as AI became a dominant force in code generation, monthly merges shot up to 43 million. Teams using AI-assisted tools to manage this flood are slashing review times by 40-60%, seeing 35% higher quality improvements, and merging pull requests in minutes instead of days. You can see how AI is shaking up these workflows in these findings about AI-assisted code reviews on cubic.dev.

By embedding intelligent analysis directly into the developer workflow and CI pipeline, organizations can build a culture of performance. Optimization becomes a shared, continuous responsibility rather than a specialized task for a select few.

This automated, proactive model is the only way to maintain a high-performance codebase at scale. It catches problems early, stops technical debt from piling up, and frees your senior engineers from tedious review cycles so they can focus on bigger, tougher challenges. To see how these systems tick, you might find our guide on the principles behind a dynamic code analyzer insightful. Ultimately, bringing these modern tools into your workflow means you don't have to sacrifice quality for speed.

Your Actionable Code Optimization Checklist

Knowing the theory is one thing, but the real magic happens when you start applying these optimization principles every single day. It's the consistent, small habits that separate the high-performing teams from the ones always putting out fires.

Think of this checklist as a practical framework to weave optimization into your team's DNA at every stage of the development lifecycle. We're not treating performance as an afterthought bolted on at the end; we're making it a core requirement from the very beginning.

This whole process should feel less like a straight line and more like a continuous cycle of improvement.

Each step—coding, reviewing, and merging—feeds back into the others. It creates a powerful feedback loop where solid reviews and smart merging practices make the initial code that much better next time around.

Phase 1: Pre-Implementation Planning

Before anyone writes a single line of code, you have a golden opportunity to set the team up for success. A few hours spent here on clear goals and smart architectural decisions can prevent entire classes of performance headaches down the road. Seriously, it can save you weeks of refactoring later.

- Define Performance Targets: Get specific. Vague goals like "make it fast" are useless. You need clear, measurable targets like "API response time under 100ms" or "reduce memory usage by 20%."

- Choose the Right Tools for the Job: Think about the scale you're building for. Will that algorithm or data structure you've chosen hold up when you have 10x the data? Make that decision now, not when the system is falling over.

- Design for Caching from Day One: Look for caching opportunities early. Figure out what data can be stored temporarily to save yourself from expensive database calls or redundant calculations.

Phase 2: Implementation and Development

This is where the rubber meets the road. During the coding phase, the focus shifts to writing clean, efficient, and—most importantly—measurable code. This is where developers turn plans into reality, making conscious choices that balance raw speed with long-term clarity and maintainability.

The biggest performance wins rarely come from one brilliant trick. They come from hundreds of small, targeted improvements that compound over time. Every single function matters.

- Write Measurable Code: Don't fly blind. Instrument your code with logging and metrics from the get-go. It makes profiling and diagnosing problems infinitely easier once you're live.

- Avoid Premature Optimization: This is a classic trap. Your first priority should be writing clear, correct code. Only dive into complex optimizations after you've profiled and proven that a specific function is a real bottleneck.

- Use Efficient Libraries: Don't reinvent the wheel. For common tasks like data processing or networking, lean on well-tested, highly optimized third-party libraries.
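For the "write measurable code" habit, a minimal sketch is a timing decorator that records how long each call takes. The `timed` decorator, the `TIMINGS` store, and `build_report` are all hypothetical names for this example; a real service would more likely export these numbers to a metrics system such as the ones named in the checklist later on.

```python
import functools
import time
from collections import defaultdict

# In-process store of call durations, keyed by function name (illustrative only).
TIMINGS = defaultdict(list)


def timed(func):
    """Record the wall-clock duration of every call to func."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Record the duration even if the call raised an exception.
            TIMINGS[func.__name__].append(time.perf_counter() - start)
    return wrapper


@timed
def build_report(rows):
    """Hypothetical unit of work worth measuring."""
    return sorted(rows)


def summarize(name):
    """Return (call count, average seconds) for one instrumented function."""
    samples = TIMINGS[name]
    if not samples:
        return 0, 0.0
    return len(samples), sum(samples) / len(samples)
```

Calling `summarize("build_report")` after some traffic gives you a call count and an average duration, which is the kind of baseline you want before deciding whether anything needs optimizing.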

Phase 3: Review and Continuous Improvement

The final phase is all about verification and fostering a sustainable performance culture. With automated checks and rigorous peer reviews, you can ensure quality standards are consistently met and that the codebase stays healthy for the long haul.

- Profile Before and After: Always measure the impact of your changes. Use a profiler to get hard data that confirms your optimization actually worked—and didn't introduce new problems elsewhere.

- Automate Performance Testing: This is your safety net. Integrate automated performance and load tests directly into your CI/CD pipeline to catch regressions before they ever see the light of day in production.

- Conduct Thorough Code Reviews: Make performance a first-class citizen in your review process. Go beyond just code style and encourage real discussions about algorithmic efficiency and resource consumption.
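One lightweight way to automate a before-and-after check is a benchmark with a performance budget that fails when the budget is exceeded. The sketch below uses the standard timeit module; `lookup_hot_path` and the 10 ms budget are placeholders, and timing-based checks like this can be noisy on shared CI runners, so treat the threshold as an assumption to tune.

```python
import timeit


def best_time(func, repeat=5, number=100):
    """Best average seconds per call across several timing runs."""
    runs = timeit.repeat(func, repeat=repeat, number=number)
    return min(runs) / number


def within_budget(measured_seconds, budget_seconds):
    """True when the measurement meets the performance target."""
    return measured_seconds <= budget_seconds


def lookup_hot_path():
    """Hypothetical critical code path under test."""
    data = {i: i * 2 for i in range(1_000)}
    return data[500]


def check_hot_path(budget_seconds=0.01):
    """Raise AssertionError if the hot path exceeds its budget."""
    measured = best_time(lookup_hot_path)
    assert within_budget(measured, budget_seconds), (
        f"hot path took {measured:.6f}s, budget is {budget_seconds:.6f}s"
    )
    return measured


if __name__ == "__main__":
    print(f"hot path: {check_hot_path():.6f}s per call")
```

Taking the minimum over several runs filters out some scheduling noise. Dedicated load-testing tools cover the end-to-end side that a micro-benchmark like this cannot.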

To tie this all together, here’s a practical checklist you can adapt for your team to ensure optimization is a continuous focus, not a one-time task.

Code Optimization Checklist by Development Phase

| Phase | Action Item | Tool/Technique |
|---|---|---|
| Planning | Define specific performance KPIs (e.g., latency <150ms) | Goal Setting, SLO/SLI Definition |
| Planning | Select appropriate algorithms and data structures | Big O Notation Analysis |
| Planning | Architect for caching and asynchronous operations | System Design, Message Queues |
| Implementation | Instrument code with logging and monitoring hooks | OpenTelemetry, Prometheus |
| Implementation | Profile code to identify actual bottlenecks | Profilers (e.g., pprof, JProfiler) |
| Implementation | Write benchmarks for critical code paths | Benchmarking Frameworks (e.g., JMH) |
| Review | Automate performance tests in the CI/CD pipeline | k6, Gatling, CI/CD Integration |
| Review | Use static analysis to catch inefficient patterns | SonarQube, Linters |
| Review | Validate performance in-IDE as code is written | Real-time tools like kluster.ai |
| Review | Make performance a required topic in peer reviews | Code Review Checklists |

By embedding these actions into your routine, performance stops being a special project and becomes just part of how you build great software.

Questions We Hear All The Time About Code Optimization

If you've spent any time tuning performance, you know certain questions pop up again and again. Here are the straight-up answers to a few of the most common ones we hear from developers and managers.

What's the Very First Step?

Measure. Then profile. Period.

Before you even think about changing a single line of code, you have to know exactly where the bottlenecks are. Guessing which parts of your app are slow is a fast track to "premature optimization"—a trap where developers waste hours tweaking code that isn't even a problem. Grab a profiling tool, get real data on execution time and memory use, and then you can make a smart decision.

How Does AI-Generated Code Change the Game?

AI coding assistants are pumping out code at a scale we've never seen before. While this is great for productivity, it's also introducing a flood of subtle performance issues, logic bombs, and security holes.

An AI might get the job done quickly, but it won't always pick the most efficient algorithm or the smartest data structure. That's why having a real-time review tool inside your IDE is no longer a luxury—it's essential. It’s the only way to catch these issues as they're written, ensuring all that extra speed doesn't tank your quality and performance.

As the legendary computer scientist Donald Knuth famously said, "Premature optimization is the root of all evil." He was right. Write clean, correct code first. Only optimize once you have hard data telling you where it hurts.

When Should I Just Leave the Code Alone?

You should back away from optimizing when the payoff is tiny and it makes the code a nightmare to read or maintain later. Your first job is always to write clean, clear, and correct code.

Only dive in when your profiling data points to a specific chunk of code and screams, "I'm the bottleneck!" If the fix doesn't deliver a real, measurable improvement to the user experience or system resources, it just isn't worth the trouble.
