What Is a Nit Code Review and How Do You Eliminate It

A nit code review is feedback that fixates on tiny, often stylistic, issues instead of the real meat of the code—the logic, performance, or architecture. It’s like proofreading a novel and only pointing out typos while completely ignoring a massive plot hole. Sure, you're technically correct, but you're missing the entire point.

The True Cost of Trivial Feedback in Code Reviews

Imagine a master chef meticulously crafting a complex dish. Someone peers over their shoulder and says, "You know, you should really chop those onions into 4-millimeter cubes, not 5-millimeter." The comment is precise, but does it actually make the dish taste any better? Probably not. This is a nitpick in its purest form: noise that adds zero value.

While the intention is usually good, a constant stream of nitpicking creates a ton of friction. It trains developers to sweat the small stuff, killing momentum and burying genuinely critical feedback under a pile of trivial corrections. This cycle doesn't just waste time; it slowly poisons team morale and tanks productivity.

From Minor Annoyance to Major Bottleneck

A single nit is just an annoyance. But a culture of nitpicking? That can bring a development team to a grinding halt. When every single pull request becomes a drawn-out debate over variable names or the proper ordering of imports, the damage starts to spread.

- Slower Velocity: Review cycles balloon from hours to days. Developers get stuck implementing a laundry list of changes that have almost no impact on the final product.

- Reduced Morale: Engineers start to dread submitting code. Who wants to face a firing squad of trivial critiques? It stifles creativity and makes people hesitant to collaborate.

- Lost Focus: Here’s the big one. Reviewers burn their limited time and mental energy policing style guides instead of hunting for serious architectural flaws, security holes, or logic bombs.

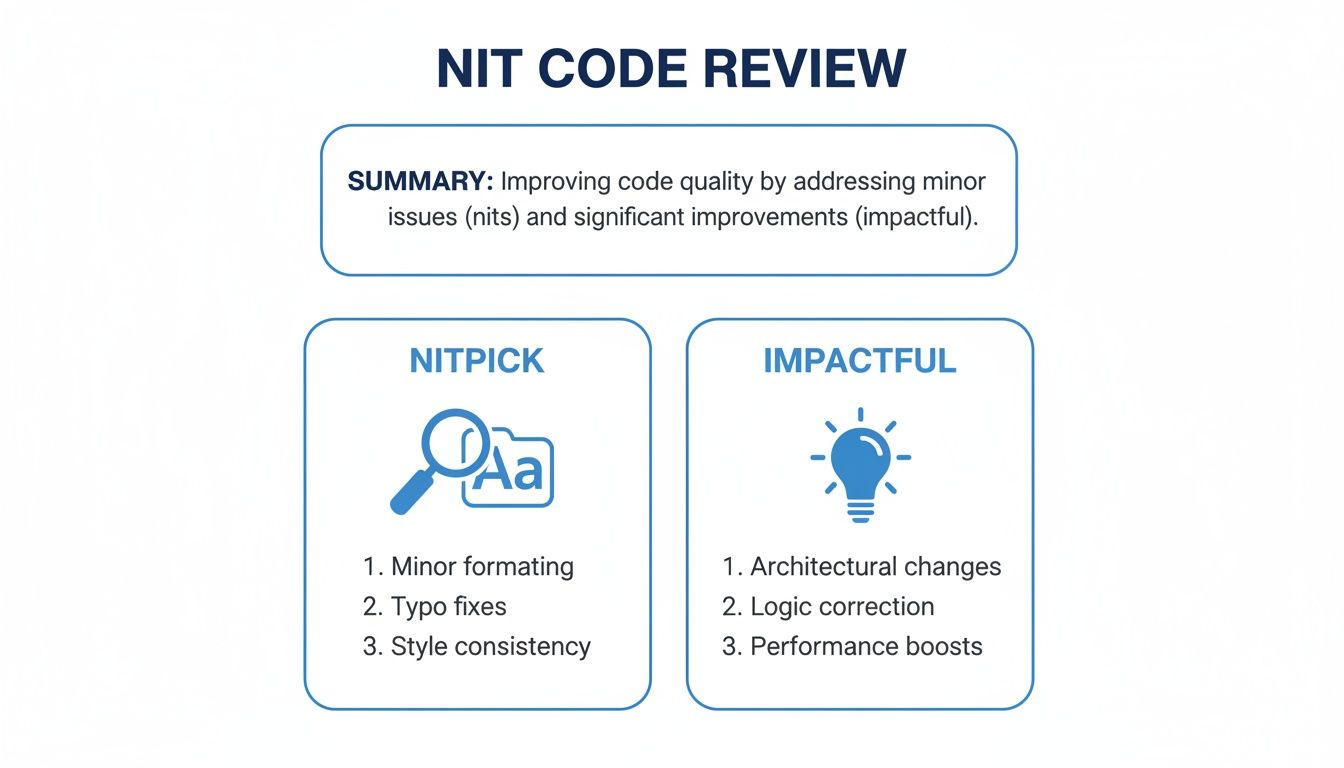

The infographic below draws a clear line between low-impact nits and the high-impact, substantive feedback that actually matters.

Ultimately, the goal is to shift the team's focus from using a magnifying glass on tiny imperfections to shining a spotlight on insights that make the code genuinely better.

To help you tell the difference instantly, here’s a quick breakdown.

Nitpick vs Substantive Feedback at a Glance

| Characteristic | Nitpick (Low-Impact) | Substantive Feedback (High-Impact) |
|---|---|---|
| Focus | Style, formatting, naming conventions. | Logic, architecture, performance, security. |
| Impact | Minimal. Code works the same before and after. | Significant. Prevents bugs, improves scalability. |
| Goal | Enforcing subjective preferences or minor rules. | Improving core functionality and long-term health. |
| Example | "Add a blank line here." | "This query will cause an N+1 problem under load." |

The table makes it clear: one type of feedback is about polishing the surface, while the other is about strengthening the foundation.
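To make the "N+1" example from the table concrete, here is a minimal sketch using Python's built-in sqlite3 module. The authors/posts schema, the sample rows, and the helper names are all hypothetical, made up purely for illustration. The point is only to show why a reviewer who spots this pattern is giving substantive feedback: the looped version issues one query per author, while the JOIN version issues one query in total.

```python
import sqlite3

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
    INSERT INTO authors (id, name) VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Linus');
    INSERT INTO posts (author_id, title) VALUES
        (1, 'Notes'), (1, 'Engines'), (2, 'Compilers'), (3, 'Kernels');
""")

query_count = 0


def run(sql, params=()):
    """Execute a query and count it, so the two approaches can be compared."""
    global query_count
    query_count += 1
    return conn.execute(sql, params).fetchall()


def titles_n_plus_one():
    # One query for the authors, then one extra query per author.
    result = {}
    for author_id, name in run("SELECT id, name FROM authors ORDER BY id"):
        rows = run(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id",
            (author_id,),
        )
        result[name] = [title for (title,) in rows]
    return result


def titles_single_query():
    # A single JOIN fetches the same data in one round trip.
    result = {}
    rows = run(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    )
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result


query_count = 0
slow = titles_n_plus_one()
n_plus_one_queries = query_count

query_count = 0
fast = titles_single_query()
single_queries = query_count

print(slow == fast, n_plus_one_queries, single_queries)
```

With three authors the difference is small, but the looped version grows by one query for every additional author, which is exactly the kind of issue a blank-line comment will never surface.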

"The person with the least amount of tact will say 'this code is terrible'. They’re not calling you a jerk. They’re not saying you’re incompetent. They’re saying your code is suboptimal."

Understanding this distinction is everything. The problem isn't getting feedback; it's getting the right kind of feedback. By automating all the stylistic checks and building a culture that values meaningful review, teams can stop wasting time and start building better software, faster.

Anatomy of a Nit: What Trivial Feedback Looks Like

To get a handle on nit-picking in code reviews, you first need to get really good at spotting nits in the wild. They’re sneaky. These comments often hide in plain sight, masquerading as helpful suggestions, but their defining trait is a total lack of impact on the code's core function.

Think of them as the weeds in the garden of your codebase. They soak up time and attention but offer zero real nourishment.

Bottom line: if a suggested change doesn't fix a bug, boost performance, or patch a security hole, it's almost certainly a nit. Nits are all about the appearance of code, not what it actually does. If you address a nit, you end up with code that runs identically—it just looks different to suit the reviewer's personal taste.
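One rough way to test the "runs identically" claim for Python code is to compare syntax trees. The sketch below is an assumption-laden illustration, not a standard tool: the function name is hypothetical, and it relies on the fact that whitespace, blank lines, comments, and quote style disappear when source is parsed with the standard ast module. It will not recognize a rename as style-only, since a rename changes the tree.

```python
import ast


def is_style_only_change(before: str, after: str) -> bool:
    """Return True when two Python snippets parse to the same syntax tree.

    Formatting, comments, and quote style do not survive parsing, so an
    identical tree means the edit cannot change what the code does.
    Renamed identifiers are NOT treated as style-only by this check.
    """
    return ast.dump(ast.parse(before)) == ast.dump(ast.parse(after))


original = "def greet(name):\n    return 'Hello, ' + name\n"

# Same code with different quotes, an extra blank line, and a comment.
restyled = 'def greet(name):\n\n    # say hello\n    return "Hello, " + name\n'

# A real behavior change: the greeting text is different.
changed = "def greet(name):\n    return 'Hi, ' + name\n"

print(is_style_only_change(original, restyled))
print(is_style_only_change(original, changed))
```

If a review comment would only ever produce the first kind of change, it is a strong hint that the comment belongs to a formatter, not a human.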

Common Categories of Nitpicks

Spotting nits gets a lot easier once you know their common hiding places. Most of them fall into a few predictable buckets that are more about subjective style than objective improvement.

- Stylistic and Formatting Fixes: These are the usual suspects. We’re talking about endless debates over single vs. double quotes, where curly braces should go, or the "correct" number of blank lines between functions. Consistency is great, but this is exactly what linters and formatters were invented for—let the machines handle it.

- Naming Conventions: Someone might suggest renaming a variable from user_data to userData, or maybe to fetchedUser. Unless the original name is genuinely confusing or breaks a clearly documented team standard, this is just personal preference creating noise.

- Code Organization: Comments about alphabetizing your imports or shifting a helper function a few lines up or down almost never add real value. They're purely aesthetic changes that just create unnecessary back-and-forth.

Here's a classic nitpick: "Could you rephrase this comment to be more concise?" It sounds helpful, but it doesn't change how the code runs. It just adds another menial task to the author's plate, slowing everything down for a gain so small you can't even measure it.

The Grey Area: Subjective "Best Practices"

The trickiest nits are the ones disguised as "best practices." This is when a reviewer insists on using a map function instead of a for loop, even when both approaches are perfectly readable and performant for the task at hand. It’s a classic case of personal coding philosophy clashing with practical, working code.
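Here is a small, made-up illustration of that map-versus-loop debate in Python. The function names and the price data are hypothetical. Both versions are readable, both do the same work, and both return the same result, so insisting on one over the other is a matter of taste unless the team's style guide says otherwise.

```python
prices_in_cents = [1999, 250, 4000]


def to_dollars_loop(cents_list):
    # Explicit loop: build the result one item at a time.
    dollars = []
    for cents in cents_list:
        dollars.append(cents / 100)
    return dollars


def to_dollars_map(cents_list):
    # Functional style: apply the same conversion with map.
    return list(map(lambda cents: cents / 100, cents_list))


print(to_dollars_loop(prices_in_cents) == to_dollars_map(prices_in_cents))
```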

Without an agreed-upon style guide that’s enforced automatically, these subjective preferences become a massive source of friction.

A good rule of thumb is to ask yourself one question: "Does this suggestion prevent a potential bug or make the code more resilient?" If the answer is no, you're probably looking at a nit. Substantive feedback is all about the "why"—it explains how a change improves logic or prevents an error, not just how the reviewer would have written it.

Why Nitpicking Happens and How It Harms Your Team

Nitpicking in code review almost never comes from a bad place. Nobody is trying to be a jerk. Instead, it’s usually a symptom of deeper, systemic issues buried in a team's process. Figuring out these root causes is the only way to solve the problem and get everyone focused on feedback that actually moves the needle.

This kind of behavior often starts with good intentions that go sideways. You’ve probably seen it: junior developers, eager to prove their worth, will look for any issue to comment on. Style inconsistencies are the lowest-hanging fruit, so that's what they point out. Without clear guidance on what makes for valuable feedback, they just default to what they can see.

On the other end of the spectrum, you have senior engineers with strong, ingrained preferences built up over years of experience. When those personal tastes aren't locked down in an automated standard, they bubble up as constant, subjective corrections during reviews. This just creates friction and makes the standards feel inconsistent.

The Unspoken Drivers of Trivial Feedback

Beyond individual habits, certain team environments practically encourage nitpicking. The most common culprit? A lack of clear, automated coding standards. When style rules are fuzzy or just live in someone's head, every single review becomes a potential debate over formatting.

This forces engineers to act as "human linters," a mind-numbing job that drains the cognitive energy they should be spending on gnarly architectural problems. Other key drivers include:

- Unclear Review Expectations: If a team hasn't explicitly defined the goal of a code review—to nail down logic, security, and performance—then the door is wide open for any and all feedback, including the trivial stuff.

- A "Find Something" Culture: In some places, reviewers feel this weird pressure to leave a comment, no matter how small, just to prove they’ve looked at the code.

- Lack of Automation: Without tools automatically handling formatting, naming conventions, and other style rules, the entire burden falls on human reviewers at the PR stage.

The core issue here is that human attention is a finite and incredibly valuable resource. Every minute a developer spends debating brace placement is a minute they are not spending analyzing a potential security vulnerability.

The Tangible Damage to Your Team

The fallout from a nitpicking culture goes way beyond just wasted time. These little interactions pile up, inflicting real damage on team dynamics, productivity, and morale. The hits are both immediate and long-term.

First off, it just slows everything down. Development cycles get stretched out as pull requests get bogged down in endless rounds of trivial back-and-forth. This delay directly hurts the team's ability to ship features and respond to what the business needs, creating a massive bottleneck. Understanding this damage is a critical piece of the puzzle when you're trying to improve developer productivity.

Even worse, it fosters a culture of fear. When developers know they're about to face a firing squad over minor details, they start getting hesitant to submit their work. This anxiety kills innovation, shuts down collaboration, and leads straight to burnout as engineers feel like their work is constantly under a negative microscope. The review process stops being a collaborative learning opportunity and turns into a stressful, adversarial ritual.

How AI Tools Are Making Nitpicking Obsolete

The best way to get rid of the friction from a nit code review is to stop nits from ever showing up in a pull request in the first place. Old-school linters and auto-formatters are a decent start, but they're often wired in way too late in the game, after the code's already been written. This is where modern AI tools are completely changing the landscape.

Instead of making you wait for a CI/CD pipeline or a pre-commit hook to tell you something's wrong, advanced AI tools plug right into your IDE. Think of them as a real-time, private coding partner that gives instant feedback on everything from style guides to variable names while you type. This immediate feedback loop keeps developers in a state of flow and bakes compliance in from the very first line.

This proactive approach moves the job of enforcing standards from humans to machines, which is exactly where it ought to be. It makes consistent style an automatic part of writing code, not a painful chore during the review.

The Move from Post-Mortem to Real-Time Review

The real magic of in-IDE AI assistants is that they offer a private, zero-judgment feedback channel. A developer can try things out, make mistakes, and fix them on the spot without ever having to show minor stylistic blunders to the team. It's a subtle change, but it has some powerful benefits:

- Takes the Burden Off Reviewers: It frees up human reviewers from the mind-numbing task of being "human linters." This lets them focus their brainpower on what actually matters—architecture, business logic, and impact.

- Lowers Author Anxiety: Developers can open PRs with confidence, knowing all the little style and formatting rules are already handled. This takes away a lot of the psychological friction of asking for a review.

- Speeds Up Cycle Times: By catching and fixing nits before the code is even committed, that endless back-and-forth that drags down so many reviews just disappears. PRs are cleaner, more focused, and get merged a whole lot faster.

When you catch nits in the editor, AI tools transform code review from a process of correction into a conversation about improvement. The focus shifts from "how the code looks" to "how the code works."

The market is catching on to this value fast. In 2023, the global market for AI-powered code review tools hit USD 907.0 million. That number points to a massive industry shift toward automation, one that's projected to soar to USD 4,936.4 million by 2030 as teams scramble to keep up with the sheer volume of AI-assisted code generation.

It’s Not Just About Style Anymore

The newest wave of AI tools goes way beyond just fixing your formatting. Platforms that integrate directly into your development environment can enforce complex, company-specific rules that a standard linter could never dream of handling. For instance, tools like Parakeet AI are designed to provide this kind of deeper, context-aware analysis.

These tools build a powerful layer of governance right into the workflow. They ensure every line of code—whether written by a human or an AI assistant—meets the team’s highest standards for security, performance, and maintainability. This takes automation from being a simple convenience to a core strategic advantage for building great software at scale. For a deeper look, check out our guide on how an AI code review tool can be put into practice effectively.

Practical Guidelines for Better Human Code Reviews

When automated tools start handling the low-level noise, human reviewers can finally get back to what they do best: having high-impact conversations that build great software. This is a big shift. We move from a "find the flaws" mindset to a "collaborative improvement" one. The whole point is to make code reviews a valuable learning opportunity, not an adversarial gauntlet.

Getting there means building new habits for both reviewers and authors. It’s all about bringing a bit of structure and intentionality to the conversation, making sure feedback is clear, constructive, and focused on what truly matters.

Best Practices for Reviewers

As a reviewer, your job is to improve the code, not just poke holes in it. Your feedback needs to be thoughtful and easy to digest, which means thinking carefully about how and when you deliver it.

- Batch Your Comments: Don't fire off comments one by one as you find things. Review the entire pull request, gather your thoughts, and submit your feedback in a single, consolidated pass. This avoids the constant stream of notifications that can feel overwhelming and disruptive for the author.

- Prefix Minor Suggestions: If you spot a tiny issue that doesn't block the merge but could be a nice improvement, prefix your comment with a label like [nit] or [optional]. This immediately signals to the author that the change is low-priority, giving them the choice to address it now or file it for later.

- Always Explain the ‘Why’: Never just say "change this." A comment without context is a command, not a conversation. Explain the reasoning behind your suggestion—connect it to a principle like performance, readability, or future maintenance. This turns a simple correction into a powerful teaching moment.
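The prefix convention above also makes comments easy to sort mechanically. The following is a minimal sketch, assuming review comments are available as plain strings. The function name and the sample comments are hypothetical, and no particular code-hosting API is implied.

```python
import re

# Matches comments that start with [nit] or [optional], in any letter case.
NON_BLOCKING_PREFIX = re.compile(r"^\s*\[(nit|optional)\]", re.IGNORECASE)


def split_comments(comments):
    """Separate review comments into blocking and non-blocking lists."""
    blocking, non_blocking = [], []
    for comment in comments:
        if NON_BLOCKING_PREFIX.match(comment):
            non_blocking.append(comment)
        else:
            blocking.append(comment)
    return blocking, non_blocking


# Hypothetical comments from a single review pass.
review = [
    "This loop re-reads the file on every iteration; cache the contents.",
    "[nit] Extra blank line here.",
    "[Optional] Could extract this into a helper later.",
]

blocking, non_blocking = split_comments(review)
print(len(blocking), len(non_blocking))
```

An author can work through the blocking list first and decide afterwards whether the rest is worth doing now or filing for later.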

The goal is to have a conversation about the code, not deliver a verdict. An effective reviewer knows their comments are just the start of a dialogue aimed at a better solution.

Best Practices for Authors

As the author, your job is two-fold: guide the reviewer toward a productive discussion and be receptive to constructive feedback. A well-prepared pull request is the first step.

- Provide Clear Context: Your PR description is your best friend. Explain what the code does, why the change is necessary, and—most importantly—what specific areas you'd like feedback on. This helps focus the reviewer's attention where it’s most needed and avoids wasted effort.

- Receive Criticism Gracefully: It’s crucial to separate your identity from your code. When a reviewer points out a flaw, it’s a comment on the work, not on you as a person. Assume they have good intentions and approach the feedback with curiosity rather than defensiveness.

- Know When to Push Back: Not all feedback needs to be accepted. If a suggestion is purely subjective or you have a strong, data-backed reason for your original implementation, it's perfectly fine to explain your rationale and stand your ground respectfully.

By adopting these habits, teams can foster a review process that’s more positive, efficient, and genuinely effective. If you want to dig deeper into this, check out our guide on establishing the best practice code review workflow.

Building a Culture That Prioritizes Impactful Feedback

Ultimately, getting rid of the friction from nit code reviews for good takes more than just smart tools—it demands a real cultural shift. Engineering leaders have to champion an environment where substantive, impactful feedback is the norm, not the exception. This is the final piece of the puzzle, combining powerful automation with intentional team practices to create a healthier, more efficient development lifecycle.

The foundation for this culture is a single source of truth for style. When you establish a clear, comprehensive style guide and enforce it automatically with linters and in-IDE AI tools, you pull all the ambiguity out of the process. This one move takes stylistic debates completely off the table, signaling to everyone that that kind of feedback just isn't a valuable contribution during a human review anymore.

Fostering a Collaborative Mindset

With machines handling the nits, your team can finally focus on what humans do best: thinking critically about architecture, logic, and potential blind spots. To nudge this behavior in the right direction, you can put a few key practices in place that reinforce the value of high-impact feedback.

- Use Smart PR Templates: Create pull request templates that prompt authors to explain the "why" behind their changes. Ask them to call out specific complex areas where they want a second set of eyes. This focuses reviewers on the big picture from the get-go.

- Celebrate Great Feedback: When a reviewer leaves a comment that's genuinely insightful, say one that catches a major bug or suggests a brilliant architectural tweak, celebrate it. Give them a shout-out in a team chat or meeting. This shows everyone what excellent, high-value feedback actually looks like.
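A PR template only helps if authors actually fill it in. As a rough sketch, a team could check a description against its template before a review starts. The section headings and the function name below are hypothetical examples, not a standard, and any real template would be something the team agrees on.

```python
# Hypothetical headings a team might require in every PR description.
REQUIRED_SECTIONS = [
    "## What changed",
    "## Why",
    "## Where I want feedback",
]


def missing_sections(description: str) -> list:
    """Return the required headings that do not appear in the description."""
    present = {line.strip().lower() for line in description.splitlines()}
    return [s for s in REQUIRED_SECTIONS if s.lower() not in present]


# A hypothetical description that skips the last section.
example_description = """## What changed
Replaced the per-user lookup with a batched query.

## Why
The old code issued one query per user.
"""

print(missing_sections(example_description))
```

A check like this could run wherever the team already runs its automation. The value is less in the script itself than in nudging authors to say where they want a second set of eyes.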

A culture of impactful review transforms the process from a contest to find flaws into a collaborative effort to improve the product. The goal becomes building better software together, not just proving who is right.

When you combine automation that filters out the noise with cultural practices that reward meaningful insight, you create a powerful feedback loop. Code quality goes up, review cycles get faster, and developers are more engaged and less stressed. This holistic approach ensures your team spends its most valuable resource—human attention—on the challenges that truly matter.

Got Questions About Nits? We've Got Answers.

Navigating the world of nit code reviews can feel a bit fuzzy at first. As teams start to get serious about cutting down on review noise, a few common questions always seem to surface. Here are some straightforward answers to the tricky ones.

What If a Nit Is Technically Correct?

This is the classic dilemma. Someone points out a minor style issue that, according to the official guide, is technically right. But is it really worth the back-and-forth? Is it worth the reviewer’s time to type it and the author's time to context-switch and fix it?

If you’re stuck doing this manually, the best compromise is to have reviewers prefix these comments with [nit]. This signals that the comment is non-blocking and respects the author's time, while still flagging the minor point for awareness.

But let's be honest, that’s just a workaround. The real solution is to automate it. If a rule is important enough to mention, it’s important enough to be handled by a linter or an in-IDE AI tool long before a human ever has to see it.

How Do We Handle Senior Engineers Who Are Set in Their Ways?

Getting buy-in for cultural change is tough, especially with seasoned developers who’ve been doing things a certain way for years. Their habits are deeply ingrained, and for good reason—they’ve worked.

The key here is to reframe the conversation. This isn't about one person's opinion versus another's; it's about the team's overall efficiency.

Focus the conversation on team velocity, cognitive load, and the strategic value of freeing up senior talent for complex architectural problems. When you automate style enforcement, it's no longer one person's opinion against another's; it's just the agreed-upon standard.

This approach takes the personal element out of the equation. It makes the process objective and turns what could be resistance into a shared goal: spending less time on trivial matters and more time on the hard problems only they can solve.
