A Complete Guide to AI-Powered Code Review

AI-powered code review is the next step for modern development, moving quality checks out of delayed pull requests and into the developer's editor for real-time feedback. It uses intelligent assistants to automatically catch errors, enforce standards, and secure code as it's being written.

The Inevitable Shift to AI-Powered Code Review

For most development teams, the traditional pull request (PR) process is broken. It’s a painful cycle of waiting for feedback, disruptive context switching, and debating subjective opinions that grinds the delivery pipeline to a halt. This manual, asynchronous approach just isn't sustainable anymore, especially with the explosion of AI-generated code.

Think of it this way: the old PR workflow is like proofreading a novel one page at a time after the author has already finished the entire book. The editor sends back notes, the author rewrites, and the cycle repeats. An AI-powered code review tool, on the other hand, is like having an expert editor who gives instant feedback as you type each sentence. It acts as an intelligent assistant that works right inside your Integrated Development Environment (IDE).

A Necessary Evolution for Modern Teams

This shift isn't just about making an old process a little better; it's a fundamental change required to keep pace with how fast we build software now. As developers write and generate more code than ever, the bottleneck of human review becomes painfully obvious. Waiting hours—or even days—for feedback on a few lines of code just doesn't work for teams that need to ship features quickly.

The core issues with the old PR workflow are impossible to ignore:

- Significant Delays: Developers push code and just… wait. They lose momentum and focus while their work sits in a queue.

- Costly Context Switching: By the time feedback finally arrives, the developer has already moved on. They have to painfully reload the original context, killing their productivity.

- Inconsistent Standards: Human reviews are subjective. What one person flags, another might ignore, leading to inconsistent code quality.

- Security Gaps: Manual reviews often miss subtle but critical security vulnerabilities that automated systems are specifically trained to find.

The goal is to transform code review from a frustrating gatekeeping process into a collaborative, real-time coaching experience. This allows developers to merge production-ready code just minutes after writing it, not days later.

This shift is part of a larger trend in modern software development, often called AI engineering. By embedding intelligent analysis directly into the developer’s workflow, teams can automate the tedious, repetitive parts of quality control. This frees senior engineers from mundane checks so they can focus on complex architectural decisions and mentoring. AI review helps ensure that every line of code, whether written by a human or an AI, is checked for security, efficiency, and compliance from the moment it’s created.

How AI Is Changing the Game for Code Quality and Security

Modern AI-powered code review is worlds apart from a traditional linter or syntax checker. We're not just talking about catching a missing semicolon anymore. This is about a deep, sophisticated analysis of your code’s logic, security, and performance, all happening in real time as you type. These tools are complex systems that blend the sharp pattern recognition of static application security testing (SAST) with the deep contextual understanding of Large Language Models (LLMs).

This combination gives them an almost uncanny awareness of your project. Think of it like having an expert co-pilot who has not only read every line of code in your repository but has also memorized its entire history, absorbed all the documentation, and somehow even grasps your original intent for a change. That's the level of insight we're talking about.

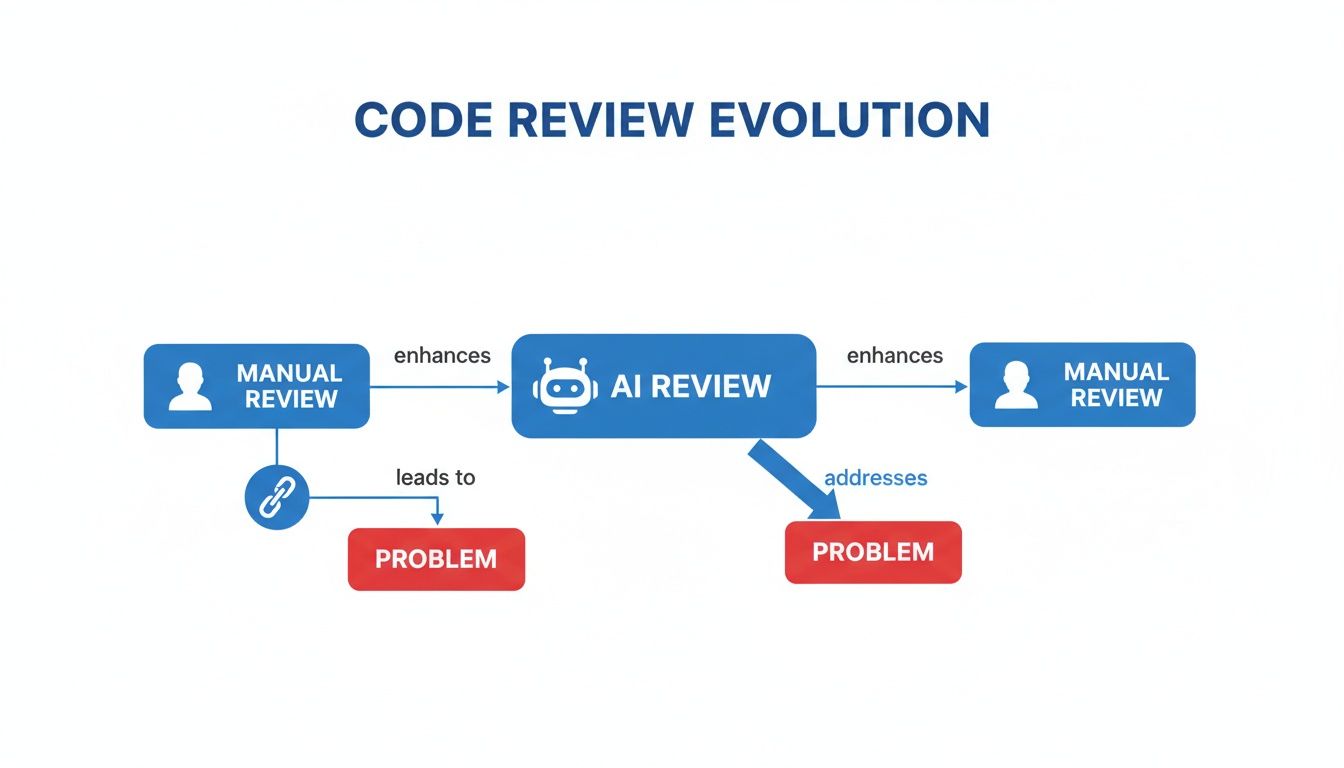

This diagram shows how we got from slow, manual reviews to this new world of intelligent, AI-driven analysis.

As you can see, AI isn't just an add-on; it steps in to fix the very things—like delays and human error—that break traditional review workflows.

Moving Beyond Generic AI Assistants

The secret sauce here is something we call an "intent engine." Plenty of generic AI coding assistants can spit out code, but they're often flying blind. They lack the project-specific context to know if that code is correct, secure, or even consistent with your existing patterns. They work in a vacuum, completely unaware of your team’s unique standards or the subtle dependencies woven throughout your codebase.

A true AI-powered code review platform, on the other hand, tracks everything. It learns from your repository’s history, monitors the prompts you feed it, and maintains context from one interaction to the next. This lets it deliver highly relevant suggestions that a generic tool would completely miss.

By understanding what you intended to build, the AI can more accurately identify when the code deviates from that goal. It’s the difference between a tool that just checks for errors and a partner that helps you write better code.

For example, ask a generic AI to write a function for user data retrieval, and you'll probably get a standard SQL query. A context-aware system, however, would know your project uses a specific ORM, follows a certain data access pattern, and has strict PII handling policies—and it would tailor the suggestion to fit perfectly.

Specialized Agents For Targeted Analysis

Instead of a one-size-fits-all model, the best systems deploy a team of specialized agents, each trained to hunt for specific kinds of issues. It's like having a squad of experts—a security specialist, a performance engineer, and a master logician—all reviewing your code at the same time.

These agents work together to paint a complete picture:

- Security Agents: Think of these as your digital guardians. They’re trained to spot common vulnerabilities like SQL injection, cross-site scripting (XSS), and improper authentication before the code is ever committed. They can catch insecure dependencies or weak cryptographic practices that a human reviewer might easily overlook.

- Performance Agents: These agents act as your profiler, sniffing out inefficiencies that could slow your application to a crawl. They might flag a database query that would cause bottlenecks at scale, suggest a more memory-efficient algorithm, or identify redundant calculations in a tight loop.

- Logic and Quality Agents: These agents focus on correctness and maintainability. They catch flawed business logic, identify potential null pointer exceptions, and enforce consistent coding standards across the project. This is what keeps a codebase clean, readable, and easy for the next developer to pick up.

Handing these specific jobs to dedicated AI agents makes the entire review process far more robust. This technical foundation allows development teams to catch a much wider range of bugs and vulnerabilities, shifting quality and security checks all the way to the left, right inside the developer’s editor.
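To make the division of labor concrete, here is a minimal Python sketch of the "team of agents" idea. It is not how any particular product works: each hypothetical agent below is a handful of hand-written `ast` checks standing in for a trained model, and the names `security_agent`, `performance_agent`, `logic_agent`, and `review` are invented for illustration. The secret-name regex is a crude heuristic that will misfire on some names, which is exactly the kind of noise real systems have to manage.

```python
import ast
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    agent: str
    line: int
    message: str


# Heuristic: variable names that usually hold credentials
SECRET_NAME = re.compile(r"api_?key|secret|token|password", re.IGNORECASE)


def is_execute_call(node: ast.AST) -> bool:
    """True for calls shaped like `something.execute(...)`."""
    return (
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Attribute)
        and node.func.attr == "execute"
    )


def security_agent(tree: ast.AST) -> list:
    findings = []
    for node in ast.walk(tree):
        # A string literal assigned to a credential-looking variable name
        if (
            isinstance(node, ast.Assign)
            and isinstance(node.value, ast.Constant)
            and isinstance(node.value.value, str)
        ):
            for target in node.targets:
                if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                    findings.append(Finding("security", node.lineno, f"possible hardcoded secret in '{target.id}'"))
        # SQL text built with an f-string and passed straight to execute()
        if is_execute_call(node) and node.args and isinstance(node.args[0], ast.JoinedStr):
            findings.append(Finding("security", node.lineno, "query built with an f-string; bind parameters instead"))
    return findings


def performance_agent(tree: ast.AST) -> list:
    lines = set()  # a set avoids duplicate reports from nested loops
    for loop in ast.walk(tree):
        if isinstance(loop, (ast.For, ast.While)):
            for node in ast.walk(loop):
                if is_execute_call(node):
                    lines.add(node.lineno)
    return [Finding("performance", n, "query inside a loop; consider batching") for n in sorted(lines)]


def logic_agent(tree: ast.AST) -> list:
    findings = []
    for node in ast.walk(tree):
        # PEP 8 prefers `is None`; `==` can be overridden by a custom __eq__
        if (
            isinstance(node, ast.Compare)
            and any(isinstance(op, (ast.Eq, ast.NotEq)) for op in node.ops)
            and any(isinstance(c, ast.Constant) and c.value is None for c in node.comparators)
        ):
            findings.append(Finding("logic", node.lineno, "compare to None with 'is'"))
    return findings


AGENTS = (security_agent, performance_agent, logic_agent)


def review(source: str) -> list:
    """Run every agent over the same parse tree and merge their findings."""
    tree = ast.parse(source)
    findings = [f for agent in AGENTS for f in agent(tree)]
    return sorted(findings, key=lambda f: (f.line, f.agent))


SAMPLE = """\
API_KEY = "sk-test-123"
def load(cur, ids, name):
    for i in ids:
        cur.execute("SELECT * FROM t WHERE id = ?", (i,))
    cur.execute(f"SELECT * FROM users WHERE name = '{name}'")
    if name == None:
        return []
"""

for finding in review(SAMPLE):
    print(finding.line, finding.agent, finding.message)
```

Running the agents independently over one shared parse tree keeps each check small and lets you add or switch off an agent without touching the others.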

Manual PR Review vs. Real-Time AI-Powered Code Review

The difference between a traditional, manual pull request workflow and a real-time, AI-driven one is stark. The old way is slow, reactive, and prone to human error. The new way is instant, proactive, and built for the speed of modern development.

Here’s a direct comparison that breaks it down:

| Aspect | Manual PR Review | AI-Powered Code Review (In-IDE) |
|---|---|---|
| Workflow Timing | Post-development, asynchronous | Real-time, as code is written |
| Feedback Speed | Hours or days | Milliseconds to seconds |
| Focus | Error detection after the fact | Proactive error prevention |
| Context | Limited to the PR diff | Full project history, documentation, and developer intent |
| Bottlenecks | Creates significant delays and context switching | Largely eliminates review bottlenecks |
| Consistency | Varies by reviewer; prone to human error and fatigue | Enforces standards consistently and tirelessly |
| Scope | Catches what humans see | Catches complex logic, security, and performance issues |

This table makes it clear: we're moving from a system that inspects a finished product to one that guides its creation. AI-powered review isn't just a faster version of the old way—it’s a fundamental shift in how we build high-quality, secure software from the very first line of code.

What You Actually Gain with Real-Time AI Review

Moving AI-powered code review into your IDE isn't just a small step up—it's a fundamental change in how your team writes, ships, and secures code. We're not talking about theoretical gains; these are real, measurable improvements that impact developers, managers, and security folks every single day.

This isn't just a niche idea anymore. The market for these tools hit an incredible USD 907.0 million in 2023. It's a clear signal that teams are ditching the slow, manual pull request process for instant AI feedback. That number is projected to explode to USD 4,936.4 million by 2030, which you can read about in the full market analysis.

For Developers: Freedom from PR Ping-Pong

Every developer knows the pain of "PR ping-pong." You write the code, submit a pull request, and then wait. And wait. Eventually, comments roll in, you make revisions, push again, and the cycle repeats, sometimes for days. Each round is a massive context switch, yanking you out of your flow to revisit code you wrote hours or days ago.

With real-time AI review, most of that frustrating process disappears. Feedback is instant. The moment you type a potential bug, a style violation, or a security risk, it gets flagged right there in your editor.

This lets you fix problems while the logic is still fresh in your head. The result? The code you write is much closer to production-ready from the get-go. Merges can happen in minutes, not days, turning the review process from a bottleneck into a helpful partner that works alongside you.

For Engineering Managers: Automated Guardrails and Consistency

If you're an engineering manager, your job is to keep quality, security, and consistency high. That's tough enough, but it gets much harder as your team grows and AI-generated code starts flooding the repository. Manual reviewers simply can't examine 100% of contributions in depth, which leads to standards being enforced unevenly.

AI-powered review is like having an automated governance engine that never sleeps. It checks every single line of code—whether from a junior dev or an AI assistant—against your team's specific coding standards, naming conventions, and compliance rules.

This gives you a level of assurance that was impossible before. Managers can finally trust that every commit meets the established guardrails without having to personally inspect thousands of lines of code. It frees you up to focus on the big picture, like architecture and mentoring your team.

This is especially critical for managing AI-generated code, which is notorious for introducing subtle bugs and style inconsistencies. An AI reviewer makes sure even the code written by another AI meets your quality bar.

For DevSecOps: True "Shift-Left" Security

Everyone talks about "shifting left," but what does it really mean? The goal is to catch security issues as early as possible. Real-time AI review is the ultimate version of this. Instead of finding a vulnerability in the CI/CD pipeline or a security scan days after the code was written, you catch it before it ever leaves the developer's machine.

Think about flagging critical security flaws the instant they're typed:

- SQL Injection: The AI spots unsanitized user input being concatenated into a query, a flaw that could expose your entire database.

- Improper Authentication: It identifies a logic error that might let an attacker bypass your login.

- Leaked Secrets: The tool catches a hardcoded API key before it's ever committed to the repository.
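The SQL injection case is easiest to see side by side. This minimal sketch uses Python's built-in `sqlite3` module with a made-up `users` table: the first function shows the kind of pattern a security check should flag, and the second shows the standard fix of binding user input as a parameter.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """In-memory database with a made-up users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is spliced directly into the SQL text
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: sqlite3 binds `name` as a value, never as SQL syntax
    return conn.execute("SELECT name, email FROM users WHERE name = ?", (name,)).fetchall()


conn = make_db()
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```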

Finding these issues at the source is far cheaper and faster than fixing them once they're merged and deployed. It turns security from a reactive gatekeeper into a proactive part of how everyone writes code. If you're curious about the technical challenges involved, we wrote a whole piece on why real-time AI code review is harder than you think.

Grappling with AI's Limits and Risks

Look, an AI-powered code review tool can absolutely supercharge your development cycle, but it's no silver bullet. Like any powerful technology, it has real limitations and risks you need to get your head around. If you don't, you'll end up with a false sense of security and a whole new class of bugs.

The biggest boogeyman everyone talks about is AI hallucinations. This is when a model confidently spits out a suggestion that's just plain wrong—factually incorrect, logically broken, or totally irrelevant. Imagine an AI suggesting a "fix" that silently injects a critical security flaw. That’s the kind of scenario that keeps engineering leads awake at night.

Hallucinations are an inherent property of how Large Language Models work, not an occasional glitch. These models generate output by predicting statistically likely patterns from their training data, not from genuine understanding. This means they can produce code that looks perfect but completely fails to do what it's supposed to.
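Here is a hypothetical illustration of that failure mode (not output from any particular model): a leap-year check that reads naturally and passes a casual glance, yet gets the year 2000 wrong. One targeted test exposes it, which is why AI suggestions still need verification.

```python
def is_leap_year_suggested(year: int) -> bool:
    # Hypothetical AI suggestion: handles the 4-year and century rules,
    # but silently drops the "divisible by 400" exception
    return year % 4 == 0 and year % 100 != 0


def is_leap_year(year: int) -> bool:
    # Full Gregorian rule: every 4th year, except centuries, unless divisible by 400
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


for year in (1900, 2000, 2024):
    print(year, is_leap_year_suggested(year), is_leap_year(year))
# 1900 False False
# 2000 False True   <- the plausible-looking version is wrong here
# 2024 True True
```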

The Problem of Crying Wolf

Right alongside hallucinations, you have the classic problems of false positives and false negatives. These aren't just small annoyances; they can completely wreck your team's trust in the tool and end up creating more work than they save.

- False Positives: The AI flags perfectly good code as being a problem. After enough of these "boy who cried wolf" alerts, developers just start ignoring the tool altogether.

- False Negatives: This is the much scarier one. The AI completely misses a real bug or a gaping security hole, giving everyone a false sense of confidence that the code is solid when it’s not.
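One way to keep both failure modes visible during a trial is to track them as precision (how many flags were real) and recall (how many real issues were flagged). This sketch assumes you label review outcomes yourself; false negatives in particular usually surface only later, for example as bugs found in QA or production. The counts in the example are invented.

```python
from dataclasses import dataclass


@dataclass
class ReviewOutcomes:
    true_positives: int   # flags that pointed at a real problem
    false_positives: int  # flags raised on code that was actually fine
    false_negatives: int  # real problems the tool never flagged

    def precision(self) -> float:
        """Share of flags worth acting on (low = crying wolf)."""
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0

    def recall(self) -> float:
        """Share of real problems the tool caught (low = dangerous misses)."""
        actual = self.true_positives + self.false_negatives
        return self.true_positives / actual if actual else 0.0


# Hypothetical pilot numbers, for illustration only
outcomes = ReviewOutcomes(true_positives=45, false_positives=15, false_negatives=5)
print(f"precision={outcomes.precision():.2f} recall={outcomes.recall():.2f}")
# precision=0.75 recall=0.90
```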

The real danger of an unchecked AI review system isn't the bad code it suggests, but the critical issues it fails to see. This is exactly why a human expert still needs to be in the driver's seat.

This is where the more sophisticated platforms are pulling ahead. They go beyond simple pattern-matching by building an "intent engine." This engine constantly checks the AI's suggestions against what the developer actually asked for and the project's history. By asking, "Does this suggestion actually solve the problem the developer described?" these systems filter out a huge chunk of hallucinations and false alarms before a human ever sees them.
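Production intent engines are far more sophisticated, so treat this as a deliberately crude stand-in for the idea: it keeps a suggestion only if it touches a file the developer changed and shares at least one meaningful word with the developer's stated task. `Suggestion`, `filter_by_intent`, and the stopword list are all invented for illustration; a real system would not rely on keyword overlap.

```python
import re
from dataclasses import dataclass

STOPWORDS = {"a", "an", "the", "to", "of", "for", "and", "in", "on", "is", "can"}


@dataclass
class Suggestion:
    file: str
    message: str


def terms(text: str) -> set:
    """Lowercased word tokens minus common filler words."""
    return set(re.findall(r"[a-z0-9_]+", text.lower())) - STOPWORDS


def filter_by_intent(suggestions, intent, changed_files, min_overlap=1):
    intent_terms = terms(intent)
    kept = []
    for s in suggestions:
        if s.file not in changed_files:
            continue  # outside the change the developer is working on
        if len(terms(s.message) & intent_terms) >= min_overlap:
            kept.append(s)
    return kept


intent = "Add pagination to the orders endpoint"
suggestions = [
    Suggestion("orders.py", "Pagination offset can go negative"),
    Suggestion("orders.py", "Rename variable tmp"),
    Suggestion("billing.py", "Pagination helper is unused"),
]
for s in filter_by_intent(suggestions, intent, changed_files={"orders.py"}):
    print(s.file, "-", s.message)
# orders.py - Pagination offset can go negative
```

Notice that the rename suggestion is dropped even though it might be valid: filtering this aggressively cuts false positives at the cost of new false negatives, the same trade-off described above.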

Keeping the Human in the Loop

At the end of the day, AI review is a tool to augment your developers, not replace them. Its job is to handle the tedious bulk of routine checks (the proverbial 80%) so your senior engineers can focus on the complex remainder: the tough architectural calls, the nuanced business logic, and mentoring the junior devs.

The best workflow treats the AI like a hyper-diligent junior engineer who prepares the first draft of a review. The AI does the heavy lifting, but the developer always has the final say, using their deep domain knowledge and critical thinking to approve or reject the suggestions. By adopting this collaborative model, your team can get a massive boost in speed without giving up the quality and security that only a human expert can truly guarantee.

Of course, beyond the code itself, you have to think about legal compliance when bringing in any AI tool. For a good breakdown of the rules, check out this practical AI GDPR compliance guide.

Implementing AI Powered Code Review in Your Workflow

Rolling out an AI-powered code review tool isn't a matter of flipping a switch. It's a process, one that requires a smart, phased approach to make sure it actually sticks and delivers real value. The idea is to move from theory to practice, proving its worth in your specific environment to build momentum for company-wide adoption.

And the best way to do that is to start small.

Forget about deploying it to the entire engineering org at once. That's a recipe for chaos. Instead, pick a single, innovative team for a pilot project. You want a group that’s open to trying new things and whose project is typical of the work you do. This gives you a controlled sandbox to learn, adapt, and gather feedback without blowing up everyone else's workflow.

The growth in this space tells you there’s a real need here. The global code review market hit USD 784.5 million in 2021 and is on track to reach USD 1,765.2 million by 2033, more than doubling. This isn't just hype; it's a direct response to the pain of manual reviews, which are notorious for missing bugs and security holes. You can get a closer look in this market report on code review tools.

Establish Clear Success Metrics Upfront

Before you even install the tool, you need to know what a "win" looks like. Vague goals like "improving code quality" won't cut it. You need hard, measurable Key Performance Indicators (KPIs) to track the tool’s impact. This data is your ammunition when you need to make the case for a broader rollout.

Think about metrics that solve your team's biggest headaches:

- Reduction in PR Review Time: How long does it take for a pull request to go from opened to merged? With instant feedback, developers submit cleaner code, and this number should drop significantly.

- Decrease in Bugs Reaching Production: Track how many bugs QA or your users report. A good implementation catches more of these issues before they ever leave the developer’s editor.

- Improved Developer Satisfaction: A simple survey can tell you a lot. Less friction and less waiting should make for happier, more productive developers.

- Increased Merge Frequency: How many PRs does a developer merge per week? Faster, more reliable reviews directly translate to higher development velocity.
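If your Git host can export pull request timestamps, the first and last metrics are simple to compute. This sketch assumes a hypothetical list of `PullRequest` records (author, opened, merged) filled with made-up values; it reports the median time from opened to merged and the number of merges per author per ISO week.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    author: str
    opened: datetime
    merged: Optional[datetime]  # None while the PR is still open


def median_cycle_hours(prs):
    """Median hours from opened to merged, ignoring unmerged PRs."""
    hours = [(pr.merged - pr.opened).total_seconds() / 3600 for pr in prs if pr.merged]
    return median(hours)


def merges_per_week(prs):
    """Count merges per (author, ISO year, ISO week)."""
    counts = Counter()
    for pr in prs:
        if pr.merged:
            iso_year, iso_week, _ = pr.merged.isocalendar()
            counts[(pr.author, iso_year, iso_week)] += 1
    return counts


# Made-up sample data for illustration
prs = [
    PullRequest("dev_a", datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 15, 0)),
    PullRequest("dev_a", datetime(2024, 5, 7, 10, 0), datetime(2024, 5, 8, 10, 0)),
    PullRequest("dev_b", datetime(2024, 5, 6, 13, 0), datetime(2024, 5, 6, 14, 30)),
    PullRequest("dev_b", datetime(2024, 5, 9, 9, 0), None),
]
print(median_cycle_hours(prs))  # 6.0
print(merges_per_week(prs))
```

The median is used rather than the mean so that a single PR stuck in review for weeks doesn't dominate the baseline you compare the pilot against.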

Configure and Customize for Your Standards

An AI tool right out of the box is a good start, but its real power is unlocked through customization. You have to configure the system to enforce your team's specific coding standards, security policies, and architectural patterns. This step turns a generic checker into a digital teammate that actually understands "how we do things here."

The tool should learn and enforce your unique codebase conventions, not force you to adopt its defaults. This alignment is critical for maintaining consistency and ensuring the AI's suggestions are genuinely helpful.

Most modern platforms let you build custom rulesets. You can teach the AI to flag deprecated functions, enforce specific naming conventions, or make sure every new database query follows your performance best practices. This ensures every developer, from junior to senior, is writing code that fits within your team's established guardrails.
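Each product defines custom rules in its own format, so the following is only a tool-agnostic Python sketch of the two examples above: flagging calls to deprecated functions and enforcing a naming convention. The deprecated name `legacy_fetch`, its replacement `fetch_v2`, and the snake_case rule are hypothetical team conventions.

```python
from __future__ import annotations

import ast
import re

# Hypothetical team conventions
DEPRECATED = {"legacy_fetch": "use fetch_v2() instead"}
SNAKE_CASE = re.compile(r"^_{0,2}[a-z][a-z0-9_]*$")


def check_rules(source: str) -> list[tuple[int, str]]:
    """Return (line, message) pairs for every rule violation, in line order."""
    issues = []
    for node in ast.walk(ast.parse(source)):
        # Rule 1: no calls to deprecated functions
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in DEPRECATED
        ):
            issues.append((node.lineno, f"'{node.func.id}' is deprecated; {DEPRECATED[node.func.id]}"))
        # Rule 2: function names must be snake_case
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and not SNAKE_CASE.match(node.name):
            issues.append((node.lineno, f"function '{node.name}' should be snake_case"))
    return sorted(issues)


SAMPLE = """\
def getUser(uid):
    return legacy_fetch(uid)

def get_order(oid):
    return fetch_v2(oid)
"""

for line, message in check_rules(SAMPLE):
    print(f"line {line}: {message}")
```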

Embrace the Feedback Loop

Finally, a successful rollout depends on treating the AI as a learning system. Every single interaction—every accepted suggestion, every ignored fix, every manual correction—is a training signal. This feedback loop is what makes the AI smarter and more dialed into your project’s unique context over time.

Encourage your pilot team to actively engage with the tool. When the AI gets something wrong, correcting it can help refine its suggestions for next time. This process of continuous improvement helps the AI-powered code review system evolve right alongside your codebase, becoming an increasingly accurate and valuable partner in your workflow. The pilot's results also give managers a clear, data-backed plan to take the tool organization-wide.
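How a vendor feeds corrections back into its models is up to the vendor, but you can still capture the signal yourself during a pilot. This hypothetical `FeedbackTracker` records, per rule, whether each suggestion was accepted or dismissed and lists rules whose acceptance rate is low enough to be worth retuning or disabling. It does not retrain anything, and the default thresholds are arbitrary starting points.

```python
from collections import defaultdict


class FeedbackTracker:
    def __init__(self, min_samples: int = 5, min_acceptance: float = 0.3):
        self.min_samples = min_samples        # ignore rules with too little data
        self.min_acceptance = min_acceptance  # below this, a rule is mostly noise
        self.shown = defaultdict(int)
        self.accepted = defaultdict(int)

    def record(self, rule_id: str, accepted: bool) -> None:
        self.shown[rule_id] += 1
        if accepted:
            self.accepted[rule_id] += 1

    def acceptance_rate(self, rule_id: str) -> float:
        shown = self.shown.get(rule_id, 0)
        return self.accepted.get(rule_id, 0) / shown if shown else 0.0

    def rules_to_review(self) -> list:
        """Rules with enough data and a low acceptance rate, sorted by name."""
        return sorted(
            rule for rule, shown in self.shown.items()
            if shown >= self.min_samples and self.acceptance_rate(rule) < self.min_acceptance
        )


tracker = FeedbackTracker()
for _ in range(5):
    tracker.record("sql-fstring", accepted=True)
for accepted in (True, False, False, False, False, False):
    tracker.record("line-length", accepted)
tracker.record("new-rule", accepted=False)
print(tracker.rules_to_review())  # ['line-length']
```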

How to Choose the Right AI Code Review Solution

Picking the right AI-powered code review solution isn't just about ticking boxes on a feature list. It's about finding a partner that slides right into your team's existing workflow without adding friction. The market for the AI coding assistants driving this shift is set to explode, from USD 8.14 billion in 2025 to a staggering USD 127.05 billion by 2032, according to one market analysis. With that kind of growth, you're going to be swimming in options.

But not all of them are created equal. The single most important difference is where the review happens. If a tool only flags issues in a pull request, you’re just putting a slightly faster engine in the same old broken car. To really kill the context switching and stop the endless waiting, you need a solution that gives developers feedback right inside their IDE.

Key Evaluation Criteria for Your Team

When you start looking at different tools, zero in on the features that will solve your team’s biggest headaches. A great solution should feel less like a nagging linter and more like an expert co-pilot sitting right there with you.

Here's what you should be looking for:

- Deep IDE Integration: Does the tool actually live inside your team's preferred editors, like VS Code or Cursor? If your developers have to leave their environment just to see feedback, you've already lost the main benefit.

- Real-Time vs. Asynchronous Feedback: Does it analyze code instantly, as it's being written, or only after the fact? The real magic happens when you get immediate feedback that prevents mistakes from ever being committed.

- Full Contextual Awareness: A smart tool needs to understand more than just the few lines of code you typed. It has to ingest your entire repository and internal wikis, and even infer the developer's original intent, to give suggestions that are actually helpful.

- Specialized Analysis Agents: Does the system use different agents for different problems? A one-size-fits-all model will miss things. You want to see specific capabilities for security vulnerabilities, performance bottlenecks, and tricky logic errors.

- Customizable Rules and Guardrails: Can you actually teach the tool your team’s unique coding standards, naming conventions, and security policies? That's what turns a generic checker into an essential part of your team.

Choosing a solution without deep contextual understanding is like hiring an editor who has never read the first half of your book. They might catch typos, but they’ll miss the plot holes completely.

Connect these features directly to your team's specific problems—whether it's PRs that take forever, inconsistent code quality, or security blind spots. The goal isn't just to find a tool that reviews code, but one that helps your team write better, safer code from the jump.

To get a head start on your search, take a look at our guide on the top AI code review tools on the market today.

Got Questions About AI-Powered Code Review? We've Got Answers.

As engineering teams start looking at AI-powered code review tools, a few practical questions always pop up. It's one thing to talk about the high-level benefits, but it's another to understand how this stuff actually works day-to-day. Let's get into the most common concerns we hear from developers.

Can AI Really Understand Our Unique Business Logic?

This is usually the first question, and it's a great one. How can an AI possibly get the nuances of your specific domain? A generic model looking at a snippet of code in a vacuum has no chance. It might flag a bit of code as "inefficient" without realizing that inefficiency is a required step in a critical financial calculation unique to your business.

The answer is deep, repository-wide context. The best AI review tools don't just see the code you just wrote. They ingest your entire codebase, your internal wikis, and even the prompts you use with your coding assistant. This lets the AI build a rich map of your project's world. It learns your team's specific patterns, architectural choices, and business rules, which is how it can give you feedback that's actually helpful instead of just generic noise.

Is This Going to Replace Developers?

Let's clear this up right away: absolutely not. The point of AI review isn't to replace developers but to make them better and faster. Think of it as the ultimate pair programmer—an assistant that handles the grunt work so you can focus on the hard stuff.

AI review automates the first pass, catching the bulk of common issues like style mistakes, potential bugs, and obvious security risks. This frees up your senior devs to spend their limited time on the tricky parts of the review that actually require their expertise.

By taking the tedious tasks off your plate, these tools make developers more valuable, not obsolete. You get to spend less time on frustrating back-and-forths and more time designing elegant solutions and building cool features.

How Do We Actually Measure the ROI on This?

Fair question. Any new tool has to justify its cost. With AI code review, the return on investment (ROI) isn't just a fuzzy feeling—it shows up in hard numbers that directly impact your delivery speed and bottom line.

You can track your success with concrete metrics:

- Faster Cycle Times: Measure the time from when a pull request is opened to when it gets merged. We see teams cut this metric in half because the instant feedback loop kills the endless PR ping-pong.

- Fewer Bugs in Production: Keep an eye on the number of bugs that escape to staging or, worse, production. When you catch issues right in the IDE, they never become expensive fires to put out later.

- Higher Developer Velocity: Look at the number of story points or features your team ships per sprint. When you remove the code review bottleneck, your team's output naturally goes up.

By tracking these numbers, you can draw a straight line from implementing an AI-powered code review tool to accelerating development, shipping higher-quality code, and making your entire engineering organization more effective.

Ready to see how real-time AI review can transform your workflow? kluster.ai integrates directly into your IDE to catch errors, enforce standards, and secure code the moment it's written. Start free or book a demo to eliminate PR ping-pong and merge production-ready code in minutes.